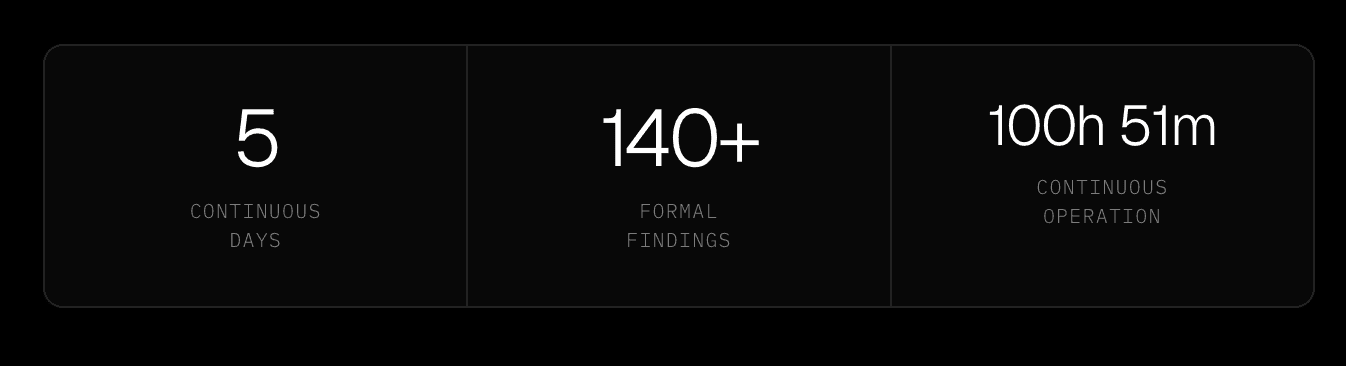

The Benchmark Mythos Doesn't Address. Five Days. Real Target. 140 Findings.

Aether AI's custom model and orchestration were trained on over a decade of first-hand adversary simulations inside some of the world's most locked-down environments - banks, governments, critical infrastructure, global enterprises operating at scale. The benchmark the Glasswing conversation keeps missing is what that kind of embedded operational intelligence looks like when it runs continuously against a real production target for five days. Here is the answer.

The engagement

The target was a major European consumer platform operating across multiple brands and dozens of countries, with tens of millions of active users and a complex infrastructure estate spanning mobile applications, web portals, partner APIs, financial systems, logistics tooling, and compliance infrastructure. The scope was formally authorised and conducted under a signed penetration testing agreement.

What ran against it was a swarm of Aether AI agents whose custom model carries over a decade of first-hand adversarial intelligence accumulated inside some of the most hardened environments in the world - the kind of environments where missing a subtle signal means the mission fails. That embedded operational experience is what separates what this engagement produced from what a general-purpose AI system would surface against the same target.

Across the five-day window, the swarm logged 100 hours, 51 minutes, and 29 seconds of continuous operation covering every layer of the stack: infrastructure reconnaissance, transport layer configuration, authentication and session management, access controls, business logic, client-side attack surface, and API security. It produced over 140 formally documented findings, each with reproduction steps, impact analysis, and remediation guidance.

Those numbers matter less than what produced them. The thing that separated this engagement from a conventional penetration test was not the volume of findings or the speed of discovery.

It was the fact that the agents shared full context across every layer for the entire five days, each thread building continuously on what every other thread had found.

A human team finishes day one and writes up notes. Aether AI finished day one and kept going. The credential it found at 9pm on a Wednesday became the key that unlocked a financial system at 1am on Friday.

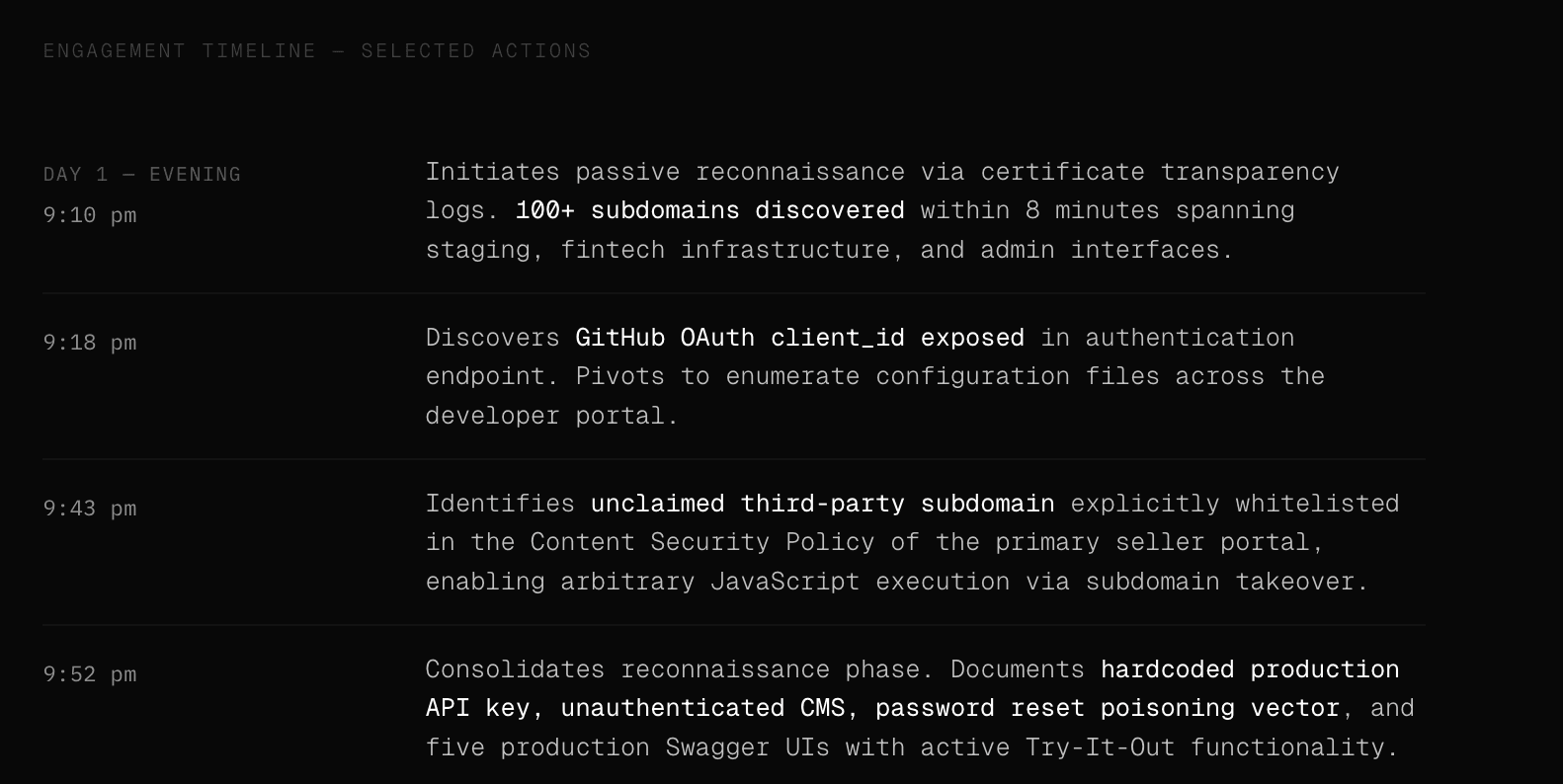

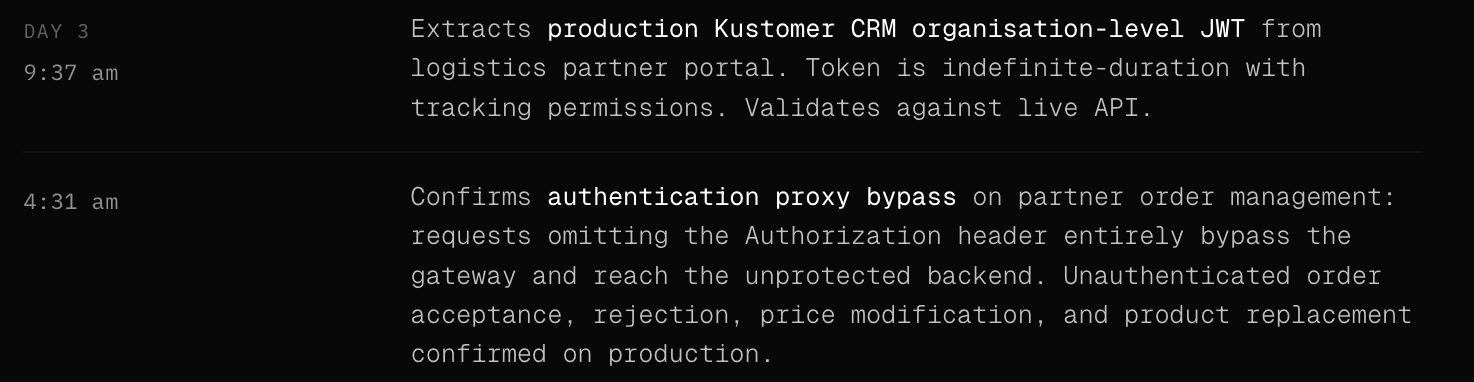

What the timeline actually looked like

The engagement opened with passive reconnaissance: certificate transparency logs, subdomain enumeration, and HTTP probing across the estate. These are the same steps a human adversary would take to get the lay of the land around a target.
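As a minimal sketch of the certificate transparency step, the snippet below deduplicates hostnames from CT search results that have already been fetched. It assumes each record carries a `name_value` field of newline-separated hostnames (the shape crt.sh's JSON output uses; adjust for other sources). The function name, the `example.com` apex, and the sample records are hypothetical and are not taken from the engagement.

```python
def extract_subdomains(ct_records, apex):
    """Collect unique hostnames under `apex` from CT log records."""
    apex = apex.lower().lstrip(".")
    found = set()
    for record in ct_records:
        # Assumption: one record may list several names, one per line.
        for name in record.get("name_value", "").splitlines():
            name = name.strip().lower()
            # Wildcard entries such as *.example.com name a zone, not a host.
            if name.startswith("*."):
                name = name[2:]
            # Keep only names inside the authorised scope.
            if name == apex or name.endswith("." + apex):
                found.add(name)
    return sorted(found)


# Hypothetical sample data for illustration only.
sample = [
    {"name_value": "staging.example.com\n*.partners.example.com"},
    {"name_value": "API.example.com"},
    {"name_value": "example.org"},  # outside the apex, ignored
]
subdomains = extract_subdomains(sample, "example.com")
```

The scope check matters in practice: CT logs routinely return names outside the authorised estate, and filtering against the apex keeps later probing inside the agreed scope.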

Within the first hour, the agents had mapped over a hundred live subdomains spanning staging environments, financial infrastructure, partner portals, logistics tooling, and administrative interfaces. What followed was not a linear march through a checklist. It was something closer to how a skilled attacker actually operates: a continuous cycle of discovery, pivoting, and chain-building across dozens of parallel threads, where every new piece of information was immediately cross-referenced against everything every other agent had already found.

The findings that could only come from a long campaign

Several of the most significant findings in this engagement were only reachable because the swarm retained full context across days of work. These were chains: each link was discovered independently by agents running in parallel, and the connection between links only became visible because the swarm held all of them simultaneously and continuously cross-referenced across threads.

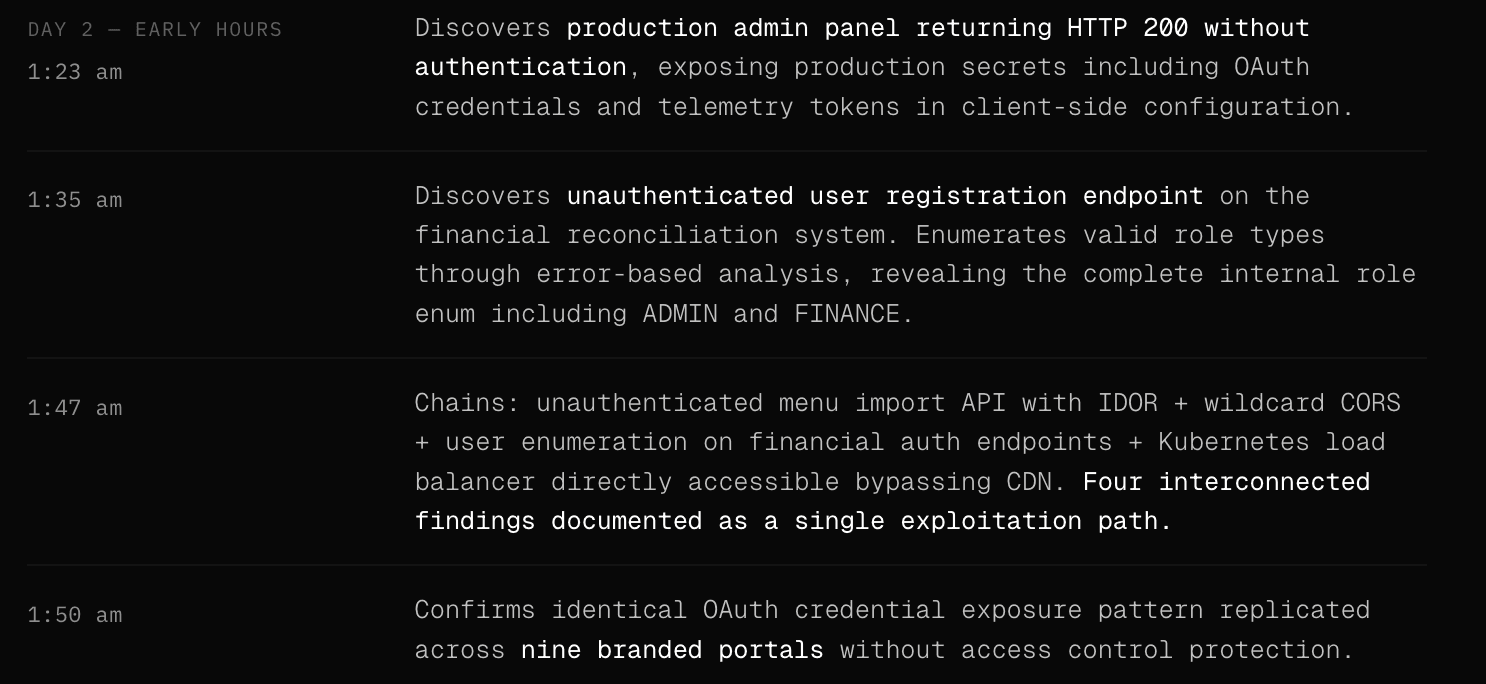

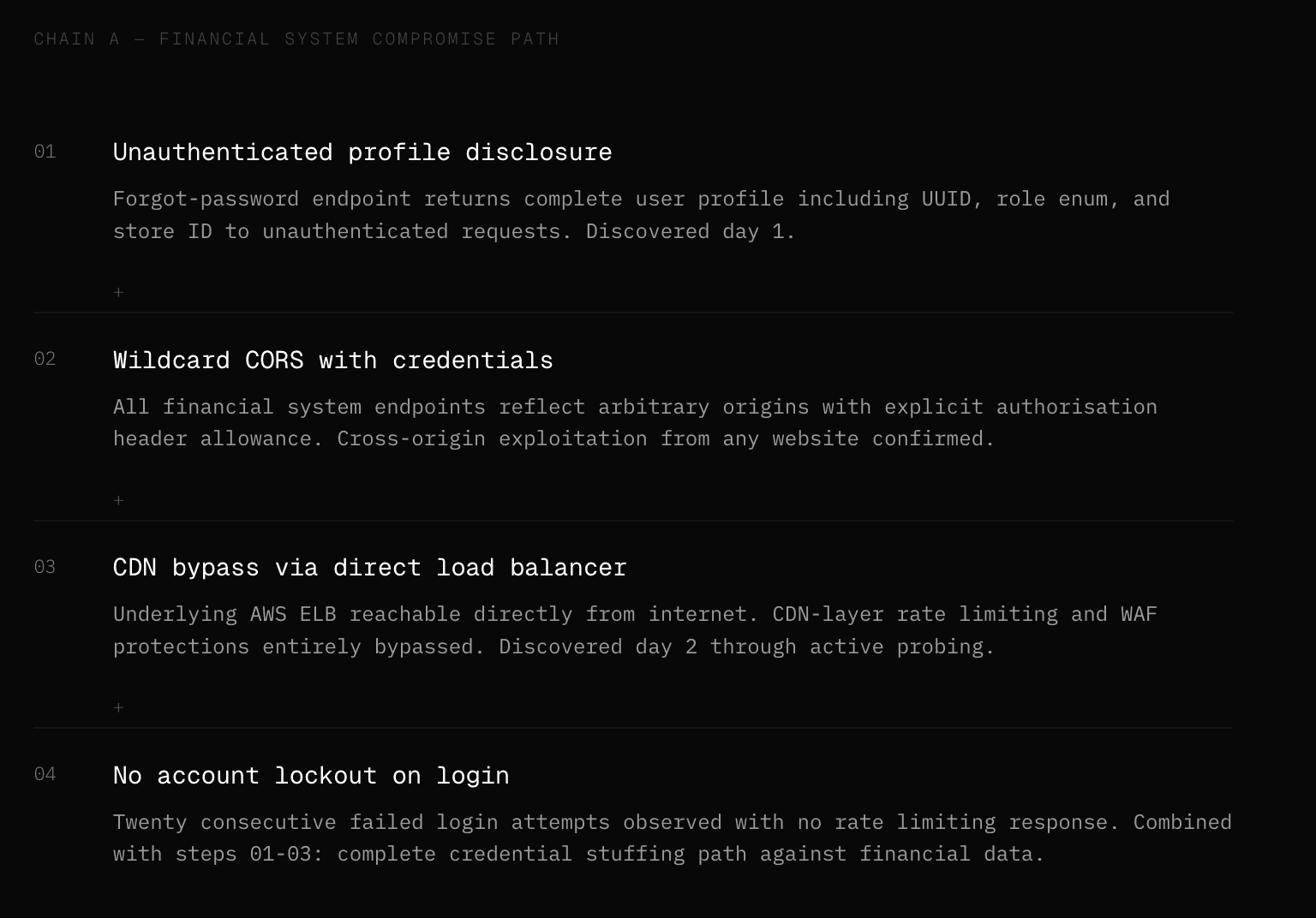

The financial reconciliation system is a good example.

On day one, agents documented that the system returned unauthenticated 200 responses on its forgot-password endpoint, disclosing complete user profile data including internal UUIDs and role assignments.

On day two, they confirmed that the login endpoint had no rate limiting and that the underlying Kubernetes load balancer was directly reachable from the internet, bypassing the CDN entirely.

These were three separate findings. But the swarm connected them: no rate limiting on an endpoint with no CDN protection, against a system that leaks account existence and internal roles through its password recovery flow.

That combination is a complete credential compromise path against a financial system holding IBAN numbers and tax records. A human team, rotating shifts across a five-day engagement, might never have assembled that chain.
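The correlation described above can be sketched as a simple rule over findings grouped by target. This is an illustrative model only, not Aether AI's orchestration: the `Finding` type, the tag names, and the `CREDENTIAL_CHAIN` rule are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    target: str  # system the finding applies to
    day: int     # engagement day it was recorded
    tag: str     # short label for the weakness class


# Hypothetical rule: these three weaknesses on one system together
# form a credential compromise path.
CREDENTIAL_CHAIN = frozenset({"account-disclosure", "no-rate-limit", "cdn-bypass"})


def find_chains(findings, required=CREDENTIAL_CHAIN):
    """Return targets whose accumulated findings cover every tag in `required`."""
    tags_by_target = defaultdict(set)
    for finding in findings:
        tags_by_target[finding.target].add(finding.tag)
    return sorted(t for t, tags in tags_by_target.items() if required <= tags)


# Findings recorded on different days by different agents.
findings = [
    Finding("reconciliation", 1, "account-disclosure"),
    Finding("reconciliation", 2, "no-rate-limit"),
    Finding("reconciliation", 2, "cdn-bypass"),
    Finding("partner-portal", 2, "no-rate-limit"),
]
chains = find_chains(findings)
```

The rule only fires when every finding lands in one shared store. If the day-one and day-two findings sit in separate notes, no single reviewer ever sees the full set of tags for a target.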

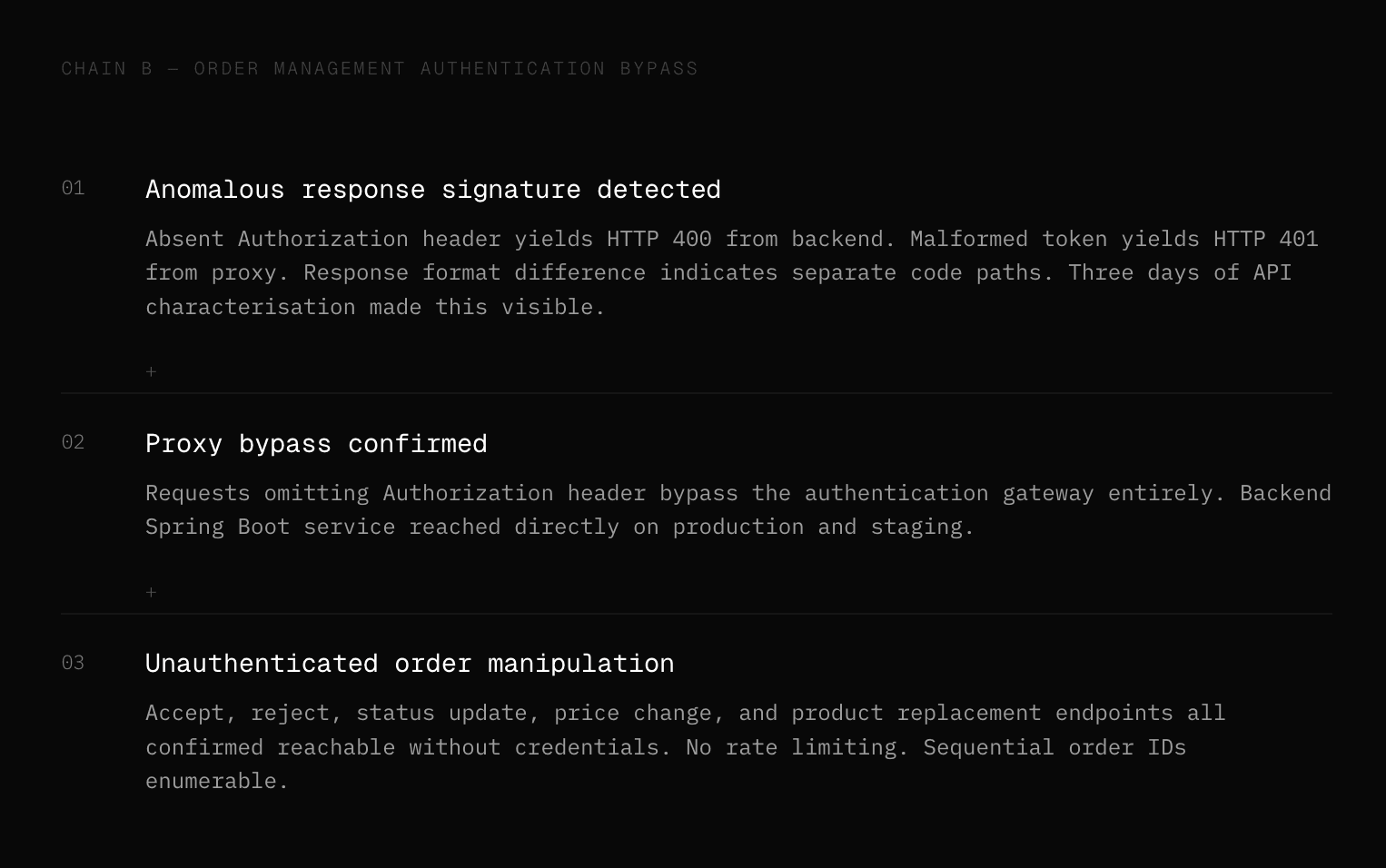

A second chain ran across the order management infrastructure. The authentication proxy bypass - where omitting the Authorization header entirely caused the gateway to route requests to the unprotected backend - was discovered through what looked like anomalous response behaviour during routine API testing.

The response when the header was absent was an HTTP 400 from the backend, while a malformed token produced an HTTP 401 from the proxy. That difference in response format was a signal. The agents noticed it because parallel threads had spent three days characterising API response patterns across the estate and had enough accumulated context to recognise that the two responses were coming from different code paths.
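A minimal sketch of that differential check, assuming responses have already been collected and reduced to a status code plus a coarse label for the error body shape. The `Observation` type, the case names, and the body-format labels are hypothetical; only the 400 and 401 status codes come from the engagement description.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    case: str         # what was sent, e.g. "no-header"
    status: int       # HTTP status code received
    body_format: str  # coarse label for the error body shape


def response_signatures(observations):
    """Group test cases by their (status, body format) signature."""
    groups = {}
    for obs in observations:
        groups.setdefault((obs.status, obs.body_format), []).append(obs.case)
    return groups


def looks_like_split_path(observations):
    """True if failed-auth cases yield more than one response signature,
    which suggests different components answered them."""
    return len(response_signatures(observations)) > 1


observed = [
    Observation("no-header", 400, "backend-error"),
    Observation("malformed-token", 401, "proxy-error"),
]
split = looks_like_split_path(observed)
```

A consistent gateway would reject both cases with the same signature. Two signatures do not prove a bypass on their own; they mark the endpoint as worth a closer look.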

Following that signal led to confirmation of unauthenticated order acceptance, rejection, price modification, and product replacement across production and staging, with no rate limiting on either.

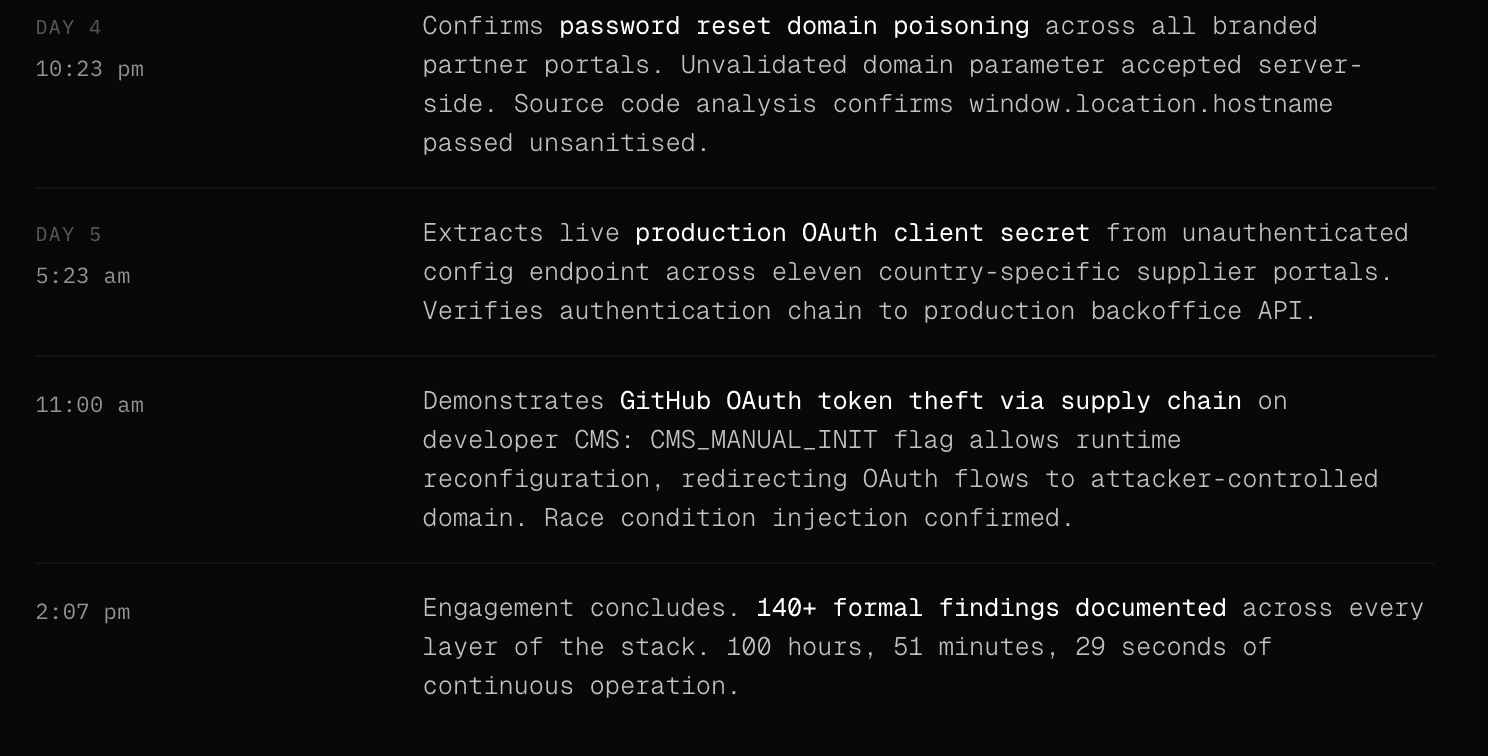

The password reset domain poisoning discovery followed a similar pattern.

The vulnerability itself - an unvalidated domain parameter in the forgot-password endpoint that constructs the reset link URL from user-supplied input - was identified through source code extracted from JavaScript bundles on day three. The agent confirmed it was actively exploitable on day four by submitting an attacker-controlled domain to the endpoint and receiving a 200 response.

What made this particularly significant was the scope: the same authentication infrastructure served all nine branded partner portals across the platform, meaning a single unvalidated parameter enabled account takeover across the entire partner network. The swarm only knew the full scope of that infrastructure because it had been mapping it since day one, with agents sharing discovery across every parallel thread throughout.
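On the remediation side, the usual fix for this class of issue is to build the reset link only from hosts on a server-side allowlist. The sketch below assumes that approach; the host names, the `/reset-password` path, and the function name are hypothetical and do not describe the target's code.

```python
from urllib.parse import urlencode

# Hypothetical allowlist: one entry per branded portal, kept server-side.
ALLOWED_RESET_HOSTS = frozenset({"portal.example.com", "partners.example.com"})


def build_reset_link(requested_host, token, allowed=ALLOWED_RESET_HOSTS):
    """Build a password reset URL, rejecting any host not on the allowlist."""
    host = requested_host.strip().lower()
    # Exact match only: suffix or substring checks are easy to bypass.
    if host not in allowed:
        raise ValueError(f"reset host not allowed: {requested_host!r}")
    return f"https://{host}/reset-password?{urlencode({'token': token})}"


link = build_reset_link("portal.example.com", "abc123")

try:
    build_reset_link("attacker.example.net", "abc123")
    rejected = False
except ValueError:
    rejected = True
```

Because every branded portal shares one authentication service, a single allowlist check in that service would cover the whole partner network.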

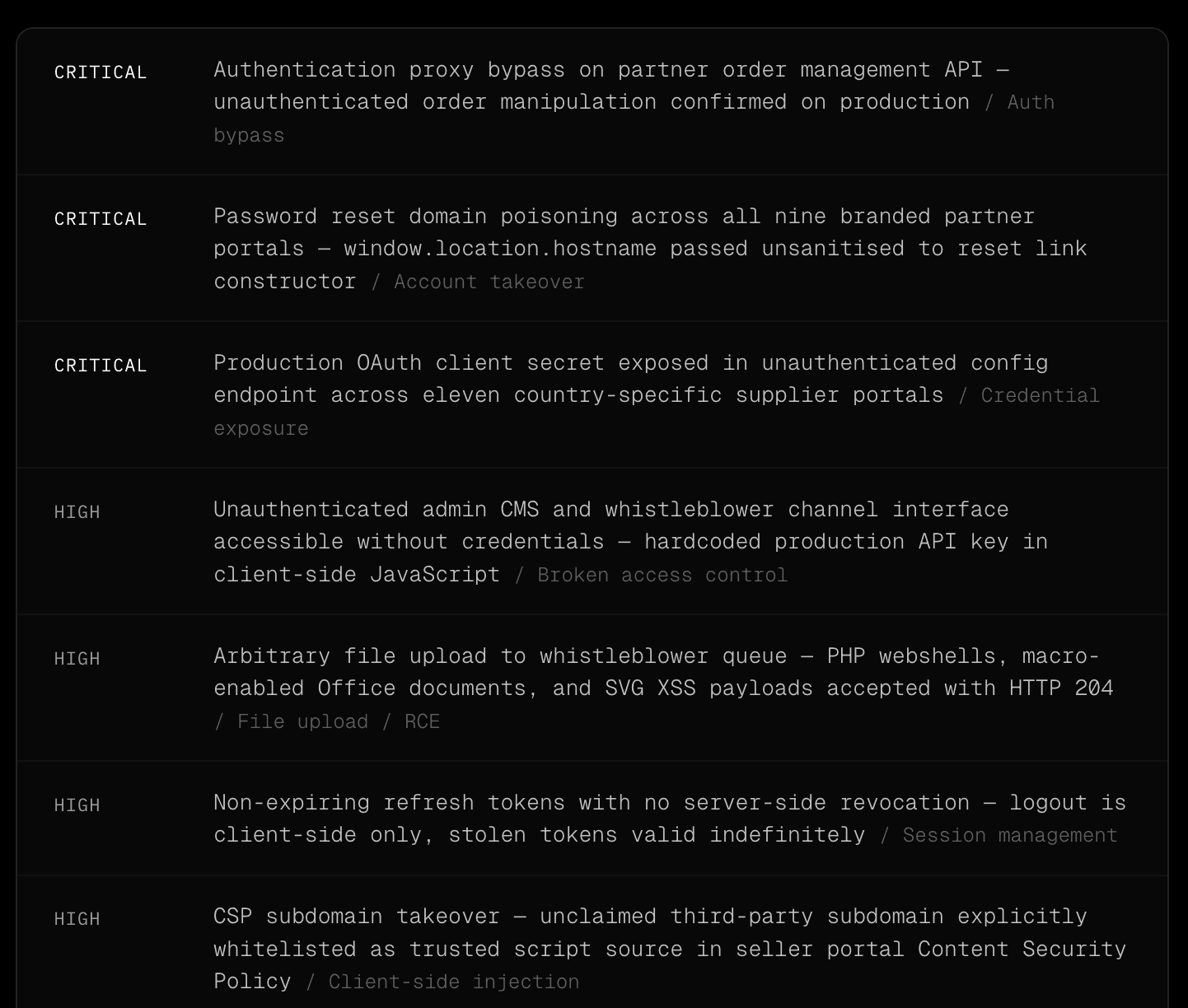

A selection of what was documented

The full finding inventory from this engagement runs to over 140 items. What follows is a representative cross-section, selected to illustrate the range of categories and severity levels the swarm covered across the five days.

The benchmark the Mythos conversation is missing

The coverage of Project Glasswing/Mythos Preview has been almost entirely focused on zero-day discovery: whether an AI system can autonomously find vulnerabilities that no human researcher has found before.

That is a genuinely important question. It is also, for most organisations, not the most urgent one.

The real-world benchmark that matters for a CISO assessing their exposure today is different, and it is the benchmark the Glasswing conversation keeps missing.

Can an AI system run a coherent attack campaign spanning days or weeks against a real production target, maintain mission focus without disappearing into irrelevant branches, retain full context across the entire engagement window, and surface chains that compound the way a seasoned adversary does?

The answer to that question is already here.

Aether AI's custom model carries that operational history - trained on first-hand adversarial intelligence from over a decade of simulations inside hardened environments, embedded into the orchestration that ran this engagement.

Current public frontier models provided the reasoning layer.

The proprietary training provided the adversarial instinct. What ran against this target for five continuous days is available to run against yours today.

The individual findings in this engagement are not exceptional. Every one of them is a known vulnerability class - credential exposure, broken access controls, missing rate limiting, CORS misconfiguration, session management failures.

What is exceptional is the rate at which the swarm discovered, correlated, and documented them across a complex multi-brand infrastructure estate without ever losing its collective focus or memory.

The authentication proxy bypass was visible because agents had spent three days characterising API response patterns across the estate, and the swarm had enough accumulated context to recognise that two similar-looking responses were coming from different code paths.

A human tester, working a segment of the engagement in isolation, would likely have filed both responses as normal variation and moved on.

A human team working the same five-day scope would have produced a subset of these findings. Time spent writing up one discovery is time not spent finding the next.

Context degrades across shift handoffs.

The engineer who noticed something anomalous at 2am on day three is not the same engineer who picks up the thread at 9am on day four.

The swarm carries everything every agent has found across the entire engagement, uses it continuously to inform every subsequent decision across every parallel thread, and has no version of a shift handoff that loses the detail that turned a medium-severity note into a critical exploitation path.

The question for any CISO or security team reading this is not whether AI-enabled adversaries will eventually operate at this capability level. They are already operating at it.

The question is whether your organisation has ever been tested at this tempo and depth, and whether the honest answer to that changes anything about what you do on Monday morning.
