While Everyone Watches Glasswing, Attackers Are Walking Through Your Front Door.

This week, Anthropic announced Project Glasswing and Claude Mythos Preview - a frontier AI model that has already autonomously discovered thousands of zero-day vulnerabilities across every major operating system and browser.

The announcement rattled markets. Cybersecurity vendor shares fell between 5% and 11% in a day.

Everyone started asking the same question: what does AI-powered zero-day discovery mean for the threat landscape? We have a different question. When did zero-days become the threat you were supposed to be worried about?

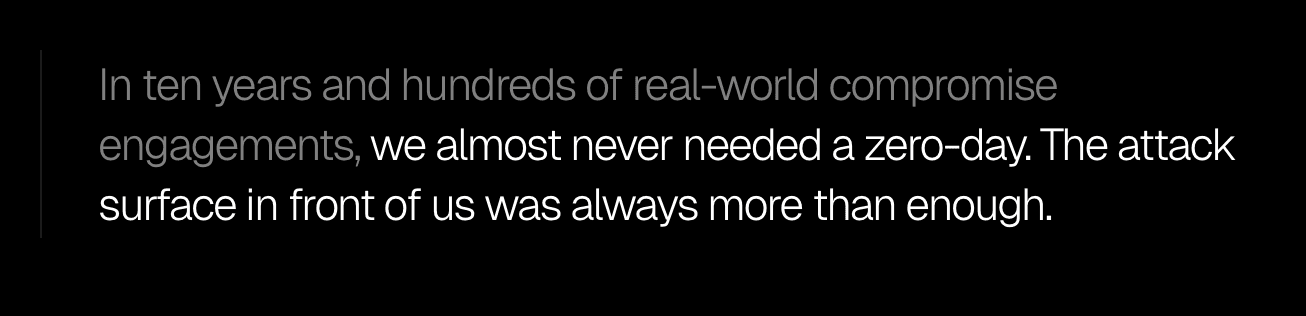

We have spent over ten years gaining authorised access to hundreds of organisations - banks, governments, critical infrastructure, global enterprises. We have been inside networks that spent millions on perimeter security, endpoint detection, threat intelligence feeds, and vulnerability management programmes. We have walked through the front door of environments that were, on paper, hardened against exactly the kind of sophisticated attack that the Glasswing announcement implies is coming.

In those ten years, across hundreds of engagements, the number of times we needed a zero-day vulnerability to achieve our objective was vanishingly small - and in every case where one was available, the same objective could have been reached through the misconfiguration, the credential, or the unpatched known CVE sitting right next to it. Zero-days exist and matter, but they are not what is driving the overwhelming majority of real-world compromise.

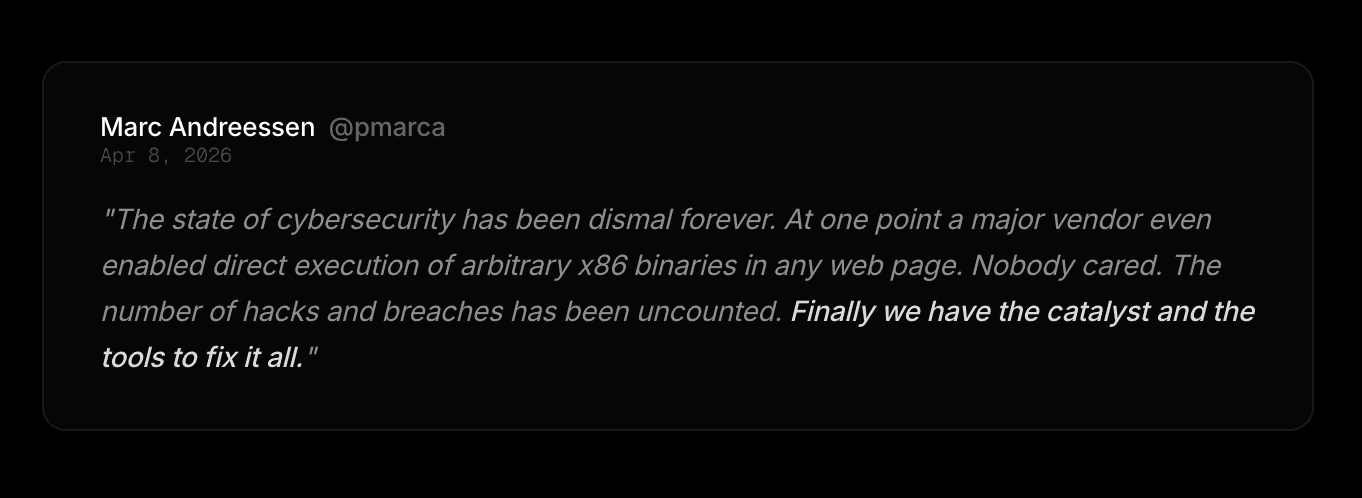

Marc Andreessen made a similar observation this week, and while the analogy was colourful, the underlying point is correct.

The organisations spending this week in emergency board meetings about AI-powered zero-day discovery are the same organisations that have not audited their cloud IAM policies in eighteen months, whose developers are committing credentials to public repositories, whose mobile applications have never been tested on the iOS version their users are actually running, and whose identity infrastructure is configured with the defaults that shipped in 2019 and have never been reviewed since.

Andreessen is right that AI gives us the catalyst and the tools. The conversation now needs to turn to what we actually fix with them, and everything we have seen across a decade of real-world offensive work says the answer is not primarily zero-days.
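To make the committed-credentials problem mentioned above concrete, here is a minimal sketch that scans text for strings shaped like AWS access key IDs. The regular expression, the function name `find_candidate_keys`, and the sample input are illustrative assumptions rather than a description of any particular tool. Real secret scanners cover many more credential formats and also walk commit history.

```python
import re

# Assumption: AWS access key IDs are 20 characters, "AKIA" followed by
# 16 uppercase letters or digits. Other credential formats are out of
# scope for this sketch.
AWS_KEY_ID = re.compile(r"\b(AKIA[0-9A-Z]{16})\b")


def find_candidate_keys(text: str) -> list[tuple[int, str]]:
    """Return (line_number, match) pairs for strings shaped like AWS key IDs."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for match in AWS_KEY_ID.finditer(line):
            hits.append((lineno, match.group(1)))
    return hits


if __name__ == "__main__":
    # AKIAIOSFODNN7EXAMPLE is the placeholder key ID used in AWS documentation.
    sample = 'region = "eu-west-1"\naws_access_key_id = "AKIAIOSFODNN7EXAMPLE"\n'
    for lineno, key in find_candidate_keys(sample):
        print(f"line {lineno}: possible AWS access key ID {key}")
```

A check like this is cheap enough to run before every commit, which is the point: the exposure it catches needs no exploit at all.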

What actually gets organisations compromised

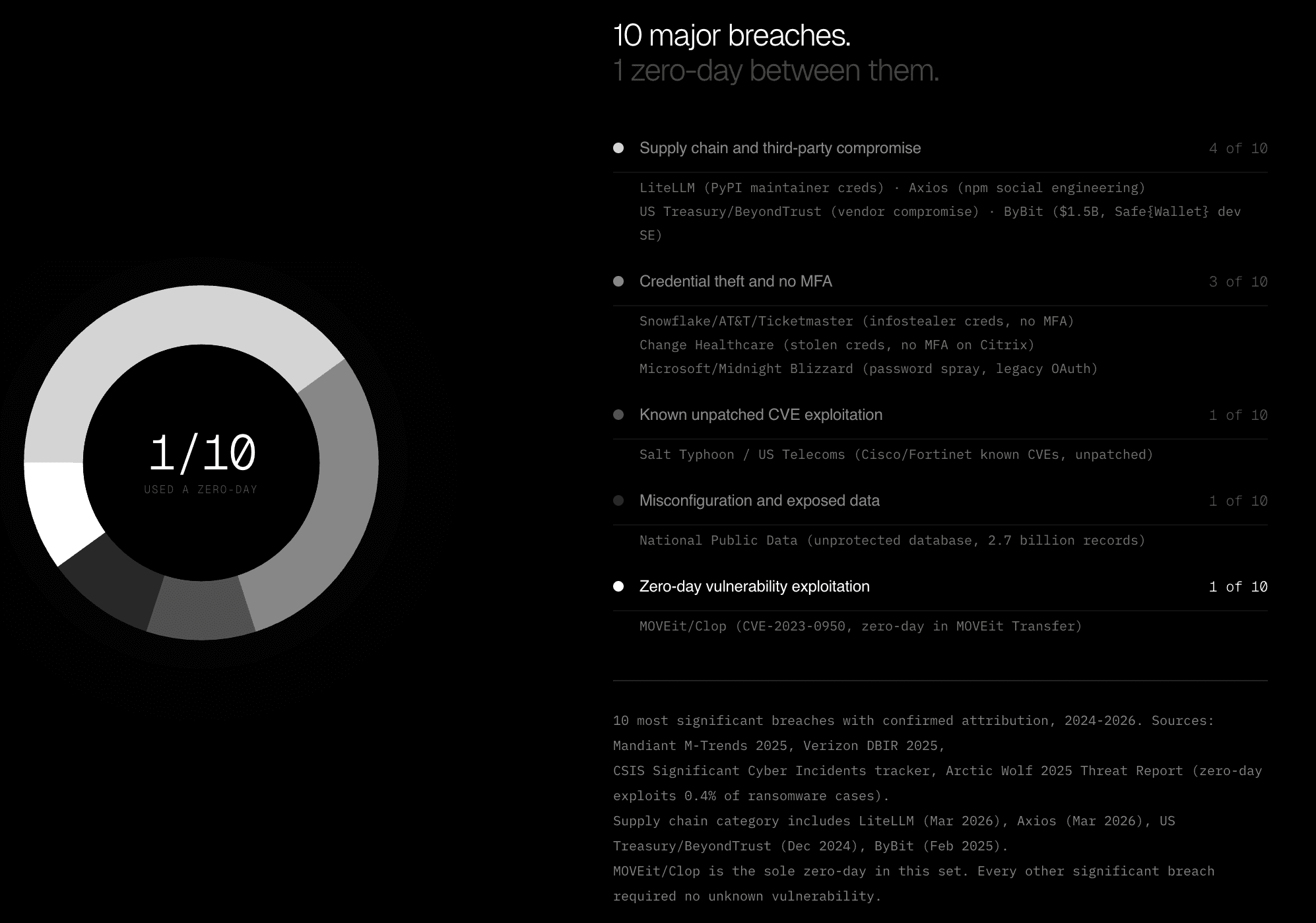

Rather than pointing to our own engagement data in isolation, consider the ten most significant breaches of the last two years where attribution is confirmed and the initial access vector is publicly documented - household names, billions of records exposed, combined financial impact in the hundreds of billions of dollars, and a grand total of one zero-day between all of them.

The chart below maps each of those ten attacks to its confirmed initial access vector. Supply chain and third-party compromise accounts for four of the ten, including LiteLLM and Axios in March 2026, where attackers compromised maintainer credentials and pushed malicious package versions to a combined 200 million weekly installs without a single exploit, and ByBit in February 2025, where $1.5 billion in Ethereum was stolen via social engineering against a Safe{Wallet} developer.

Credential theft with no MFA accounts for three more: Snowflake, where infostealer-harvested credentials used against accounts with no multi-factor enforcement cost AT&T and Ticketmaster hundreds of millions in breach costs; Change Healthcare, where a stolen credential on a Citrix portal without MFA brought down US healthcare billing infrastructure for months; and Midnight Blizzard's breach of Microsoft, which began with a password spray against a legacy test account and then abused a legacy OAuth application.

Then come the known, unpatched CVEs in Cisco and Fortinet devices that Salt Typhoon used to sit inside US telecoms infrastructure undetected for months, and an unprotected database at National Public Data that exposed 2.7 billion records to anyone who knew to look for it.

And then there is MOVEit - the one genuine zero-day in the set, exploited by Clop before a patch existed and responsible for real damage at scale, which earns its place on the chart as an honest counterexample rather than something to be explained away.

Nine out of ten of the most significant, most damaging, most widely covered cyber attacks of the last two years required no zero-day vulnerability.

They required a compromised maintainer account, a credential harvested by an infostealer, a Citrix portal without MFA, a developer targeted with a convincing social engineering campaign, a known CVE that an organisation never got around to patching, and a database left exposed because nobody checked.

These are not obscure attack classes. They are the same classes that have dominated breach data for a decade, and they are the classes that AI-powered attack capability - including the AI our own agents use - makes dramatically more exploitable at scale.

What Aether AI is already chaining across your full stack

This is not a theoretical argument about what AI might be able to do. Aether AI is doing it today, using current public frontier models alongside our own custom model trained on a decade of proprietary offensive research, running through orchestration harnesses that we have built and refined through real engagements.

The capability that the industry is watching Glasswing for - autonomous, multi-step attack chains across complex environments - is something we have been demonstrating in production engagements for months.

What that looks like in practice is not a single clean exploit against a single target. It looks like an agent that starts on a mobile application, identifies an unvalidated deeplink handler, traces the OAuth callback chain through to a server-side request forgery vulnerability in the API layer, pivots from there into an internal service that was never meant to face the internet, and surfaces the full chain with proof of exploitation at every step - across mobile, API, web, and cloud infrastructure in a single continuous engagement.
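The first link in that chain, an unvalidated deeplink handler, is usually closed with a strict allowlist on the redirect target. The sketch below shows the idea in Python for brevity; the host names, `ALLOWED_HOSTS`, and `is_safe_redirect` are hypothetical, and a real mobile handler would apply the same check in the platform's own language.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: the only hosts the app will send a user (and any
# OAuth callback parameters) to. The host names are placeholders.
ALLOWED_HOSTS = {"app.example.com", "login.example.com"}


def is_safe_redirect(target: str) -> bool:
    """Accept only absolute https URLs whose exact host is on the allowlist."""
    try:
        parts = urlsplit(target)
    except ValueError:
        # Malformed URLs (for example a broken IPv6 literal) are rejected.
        return False
    if parts.scheme != "https":
        return False
    # hostname is lower-cased and excludes any userinfo or port, so
    # "https://app.example.com@evil.test/" resolves to "evil.test" here.
    return parts.hostname in ALLOWED_HOSTS


if __name__ == "__main__":
    for url in (
        "https://app.example.com/callback",
        "https://app.example.com.evil.test/callback",
        "https://app.example.com@evil.test/",
        "//evil.test/callback",
    ):
        print(url, is_safe_redirect(url))
```

Matching the exact parsed host, rather than checking a prefix or substring of the raw string, is the design choice that matters: prefix checks are what let lookalike hosts through.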

The agent holds the full context of the engagement in memory and reasons across every layer the way a skilled human attacker would, without the constraint of needing to stop, sleep, or hand off to someone else.

We have seen this in SSRF chains that reach internal metadata services through API endpoints that looked innocuous in isolation, in mobile applications where a business logic flaw in the iOS layer unlocked an authentication bypass in the backend that no web-focused test had ever reached, and in cloud environments where a misconfigured IAM role discovered passively during a web application test became the pivot point for lateral movement across the entire account.
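The SSRF-to-metadata-service pattern above has a well-known defensive counterpart: refuse to fetch any URL whose resolved address falls in an internal range. The following is a minimal sketch of that address check using Python's standard `ipaddress` module. The function name `is_blocked_ip` is an assumption, and the sketch deliberately covers only IP literals; a production guard would also resolve hostnames, check every resolved address, and connect to the address it checked so that DNS rebinding cannot swap the target.

```python
import ipaddress


def is_blocked_ip(address: str) -> bool:
    """True if an IP literal is in a range a server-side fetcher should refuse.

    Raises ValueError if `address` is not an IP literal.
    """
    ip = ipaddress.ip_address(address)
    # Unwrap IPv4-mapped IPv6 addresses such as ::ffff:169.254.169.254.
    mapped = getattr(ip, "ipv4_mapped", None)
    if mapped is not None:
        ip = mapped
    return (
        ip.is_private        # e.g. 10.0.0.0/8, 192.168.0.0/16
        or ip.is_loopback    # 127.0.0.0/8, ::1
        or ip.is_link_local  # 169.254.0.0/16, where cloud metadata services live
        or ip.is_reserved
        or ip.is_unspecified
    )


if __name__ == "__main__":
    for candidate in ("169.254.169.254", "10.0.0.5", "127.0.0.1", "8.8.8.8"):
        print(candidate, is_blocked_ip(candidate))
```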

The chains exist because modern application infrastructure is interconnected, and the only way to find them reliably is to test across the full surface simultaneously with an agent that carries context across every layer.
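One way to picture why isolated findings understate risk is to treat each finding as an edge in a graph and search for a path from the internet to a sensitive asset. The sketch below is an illustrative model only: the asset names and edges in `FINDINGS` are hypothetical, loosely mirroring the chain described earlier, and it does not describe how Aether AI's agents work internally.

```python
from collections import deque

# Hypothetical findings: each edge means "control of the first asset gives a
# route to the second". None of these come from a real engagement.
FINDINGS = {
    "internet": ["mobile_app"],
    "mobile_app": ["oauth_callback"],
    "oauth_callback": ["api_ssrf"],
    "api_ssrf": ["internal_service"],
    "web_app": ["iam_role"],  # reachable only if web_app itself is reachable
}


def shortest_chain(graph, start, goal):
    """Breadth-first search: shortest list of assets from start to goal, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


if __name__ == "__main__":
    chain = shortest_chain(FINDINGS, "internet", "internal_service")
    print(" -> ".join(chain) if chain else "no path found")
```

Each edge on its own might be rated low or medium severity. The path only appears when the mobile, API, and infrastructure findings sit in the same graph, which is the argument for testing across layers rather than one layer at a time.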

What AI-powered attack capability actually means for your exposure

The Glasswing announcement matters. Claude Mythos Preview finding zero-day vulnerabilities autonomously is a real development, and its long-term implications for the threat landscape are serious enough that Anthropic was right to treat it as something requiring a coordinated industry response. But it is not the question that most CISOs should be prioritising right now.

The question is what the near-term threat to your organisation actually looks like. The answer is AI-powered offensive capability applied to the categories in that chart, and that is what should be keeping your security team occupied.

Zero-day discovery matters, but your exposure across credentials, supply chain, misconfigurations, and cloud drift is widening far faster than any zero-day gap - and it is widening against adversaries who are already using AI to exploit it.

An attacker with access to a capable AI system and no zero-day capability whatsoever can now enumerate your entire external attack surface, identify every misconfigured cloud resource, map every identity provider that does not enforce MFA, find every API endpoint with weak authorisation, discover every mobile application deeplink handler with an unvalidated redirect, and chain those findings together into a compromise path with a thoroughness and speed that no human team could match. That capability exists today, in models that are already available, and it is aimed at an attack surface that most organisations do not manage well enough to withstand it.

Aether AI was built around the enormous, underexplored, systematically unaddressed fabric of exposure that exists in every organisation - the misconfigurations, the identity gaps, the cloud drift, the mobile blind spots, the API surface that nobody has looked at comprehensively since the application was built.

This is the attack surface that skilled adversaries have always been able to exploit with patience, and that AI now makes exploitable at machine speed, with the kind of contextual reasoning that surfaces chains neither human testers nor traditional scanners ever connected.

Project Glasswing is an important initiative and the industry needed the signal it sends. But the question for any CISO trying to figure out what to do this week is not about what Mythos-class models will eventually enable - it is about how your organisation stands up right now against an attacker who is already using AI to chain misconfigurations, stolen credentials, exposed APIs, and mobile vulnerabilities into full compromise paths across your stack, using models that have been publicly available for months. That attacker is not waiting for Glasswing to ship. They are already running.

Aether AI gives you the same capability on your side of the equation, today. If you want to know whether an active, AI-enabled adversary currently has an easier path through your environment than your last pen test suggested, that is exactly the question we are built to answer.
