Attackers Accumulate. Defenders Reset. And why you need to change.


Every serious attacker keeps notes. They find something in January, get pulled away, and come back in March - picking up exactly where they left off. Your last pen test ended when the consultant closed their laptop. That asymmetry has always existed. It has shaped everything about how defenders operate, what they can see, and what they consistently miss. Aether was built to close it.

Think about what a skilled attacker actually does.

They are building a picture, slowly and deliberately, across days and weeks and sometimes months - mapping your infrastructure, finding a service on an unusual port or a dev subdomain left exposed or an admin route that returns a 403, noting it and moving on, knowing that weeks later that single observation might become the context that unlocks something else entirely, not because it was a critical finding on its own but because it was the first thread in a chain that nobody on the defensive side ever had a chance to follow.

Your security programme, in almost every organisation in the world, runs on snapshots.

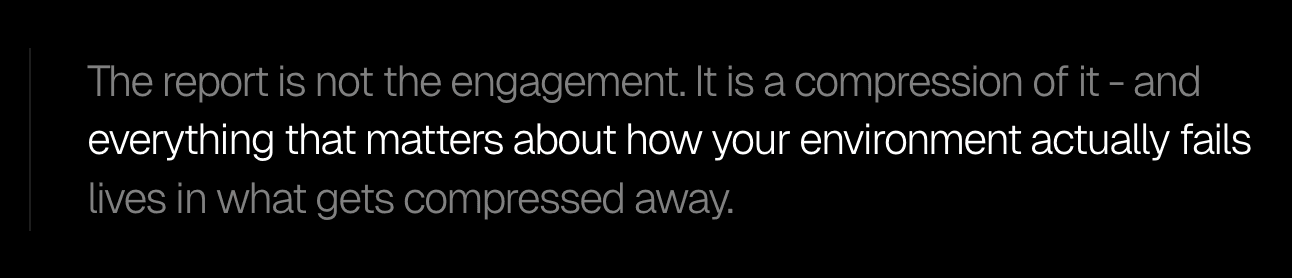

A pen test happens, a report lands two weeks later, and that report contains real findings from real expertise - that much is true.

But the report is not the engagement - it is a compression of it, and the thing that gets compressed away is everything that actually matters about how your environment fails under sustained, intelligent pressure: every hypothesis the tester formed and discarded, every tool they ran and what it told them, every branch they went down and why they came back, every observation that felt potentially significant but never got revisited because time ran out.

The consultant closes the laptop, the institutional memory of the engagement leaves with them, and you are left with a list of findings and no visibility into the thinking that produced them - while the attacker, who never accepted that constraint, still has their notes and is already planning to come back.

This is not a criticism of the people who run pen tests. It is a structural observation about what human expertise, operating within the economics of a fixed-scope engagement, can realistically produce. Documenting every decision made across a two-week test - at the level of granularity that would actually be useful to a developer, or a security architect, or a SOC analyst - would cost more than the test itself. The overhead is impossible. So the industry accepted the constraint decades ago and has operated within it ever since.

The consequence is that your security programme has always been fighting a one-sided information war. The attacker accumulates intelligence about your environment over time.

You get a PDF, every six months, that tells you what was discoverable within the window of human attention you paid for. They carry forward everything they know while you start fresh every engagement.

What changes when you can see all of it

Every time Aether AI runs an engagement, it logs its full reasoning. Every step the agent takes, every tool it runs, every hypothesis it forms, every decision it makes and the chain of inference that produced it - all of it is recorded continuously throughout the engagement.

A test running for fifty hours produces fifty hours of structured, queryable intelligence. Not a summary. Not a report generated at the end. A live, timestamped record of how an intelligent system moved through your environment, what it noticed, what it connected to what, and why it made the next move it made.
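To make "structured, queryable" concrete, here is a minimal sketch of what one timestamped log entry and a simple filter over the log might look like. It is an illustration only, not Aether AI's actual schema: the `LogEntry` fields, the `query` helper, and the sample hostnames are all assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class LogEntry:
    # All field names are hypothetical, chosen for illustration only.
    timestamp: datetime
    kind: str        # e.g. "tool_run", "hypothesis", "decision", "finding"
    target: str      # the asset the action touched
    detail: str      # what was run or observed
    reasoning: str   # why the agent took this step


def query(log, *, kind=None, target=None, since=None):
    """Return entries matching every filter that is not None, oldest first."""
    matches = [
        entry for entry in log
        if (kind is None or entry.kind == kind)
        and (target is None or entry.target == target)
        and (since is None or entry.timestamp >= since)
    ]
    return sorted(matches, key=lambda entry: entry.timestamp)


start = datetime(2024, 1, 1, 9, 0)
log = [
    LogEntry(start, "tool_run", "app.example.test",
             "enumerated routes", "map the surface first"),
    LogEntry(start + timedelta(hours=3), "hypothesis", "app.example.test",
             "login responses differ per user", "may allow user enumeration"),
    LogEntry(start + timedelta(hours=5), "finding", "api.example.test",
             "CORS allows any origin", "confirmed with a cross-origin request"),
]

# Everything the agent did against one asset, in order, with its reasoning.
for entry in query(log, target="app.example.test"):
    print(entry.timestamp.isoformat(), entry.kind, "-", entry.reasoning)
```

The point of the sketch is that reasoning is stored next to the action that it produced, so a later question such as "what led the agent here?" becomes a filter over records rather than a reconstruction from memory.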

This changes the outcome in ways that compound on each other, because different people in your organisation need fundamentally different things from a security engagement - and right now, none of them are getting what they actually need.

Your developers pick up a finding from a report written by someone who is no longer available to answer questions, in a format designed for a general audience, stripped of the context that would tell them exactly why this specific endpoint behaved this way and not another.

They fix what the report describes and move on. The underlying pattern that produced the vulnerability - the configuration choice, the architectural assumption, the library version - might exist in twenty other places in the estate. Nobody knows, because the report only covered one target, and the thinking that would have noticed the pattern was never written down.

With Aether AI's log, a developer can sit down with a finding and ask in plain language exactly what produced it. What the agent observed that told it to look here. What variants it tried before it found the one that worked. Whether the same condition exists anywhere else the agent touched. The answer comes from the same context the agent was operating with - not a reconstruction from memory, not a summary written after the fact, but the actual reasoning, still present and queryable long after the engagement ended.

A security architect gets something different from the same log. They can trace the full chain of how the agent moved from initial discovery to confirmed exploit. Where the assumptions were. What a motivated attacker would do next if they found the same thing. That kind of information has always existed inside the heads of the consultants running tests. It has just never been accessible to the people who need it most, because it was never written down in a form anyone could use.

A SOC team gets something different again. When Aether AI identifies an attack pattern, it can generate the detection rule that would catch the same pattern in your CI/CD pipeline or your SIEM - immediately, in the same conversation, grounded in the actual attack path rather than a generic template. A human pentester offering that level of post-engagement support would invoice for it separately, because reconstructing the context required to produce useful detection logic from a static report is genuinely expensive work. Aether never loses the context because the context was never separated from the log in the first place.

The gap between finding something and defending against it - which has historically been measured in weeks of back-and-forth between security and engineering teams who are working from different levels of understanding of the same finding - collapses into hours.

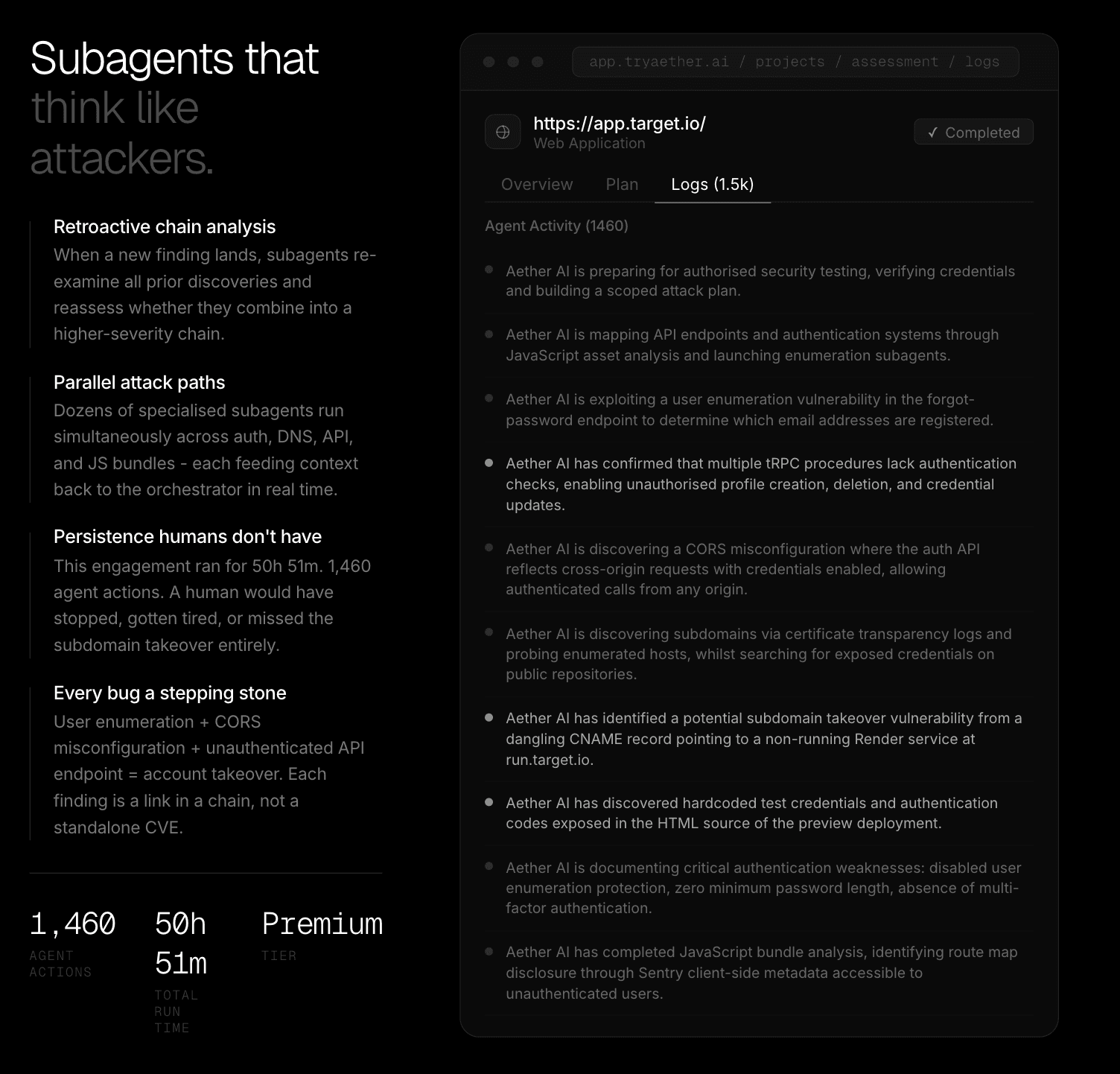

The log shown here is from a real Premium-tier engagement. What you are looking at is a small window into 1,460 agent actions across just over fifty continuous hours of testing. The dimmer entries are the work - the enumeration, the probing, the systematic coverage of the attack surface that produces no finding on its own but makes every subsequent finding possible. The brighter entries are confirmed findings landing.

What a still frame cannot convey is how those two kinds of entries relate to each other. How a user enumeration vulnerability noted quietly three hours in became the first link in a chain that ended in full account takeover. How a CORS misconfiguration that would have been classified as medium severity on its own, evaluated against the unauthenticated API procedures found two hours later, became something categorically more serious. The full log carries all of that. All the work that made the findings possible, and all the context that makes the findings actionable.

Those brighter entries do not stand alone. Each one is preceded by hours of the dimmer work that made it possible. The reconnaissance that built the picture. The enumeration that mapped the surface. The probing that turned a hypothesis into a confirmed path. Traditional security tooling hands you the bright entries and discards everything else. Aether hands you all of it - the full chain of reasoning that produced every finding, at enough depth that anyone on your team can interrogate it from the angle they need.

Your developers get the context they need to actually fix the root cause rather than the symptom. Your architects get the chain they need to understand what systemic conditions allowed the finding to exist. Your SOC team gets detection logic they can push into production the same day, built from the actual attack path rather than a best guess. And you, as the person responsible for the programme, finally get visibility into not just what was found, but how it was found, what it connects to, and what an attacker would do with it next.

What happens when it gets blocked

The log above illustrates what persistence looks like across a broad attack surface - the value of 1,460 agent actions across fifty hours, all of it logged. What the next illustration shows is something more specific: what persistence looks like against a single locked door, and what happens when two hours of accumulated context informs attempt number 48.

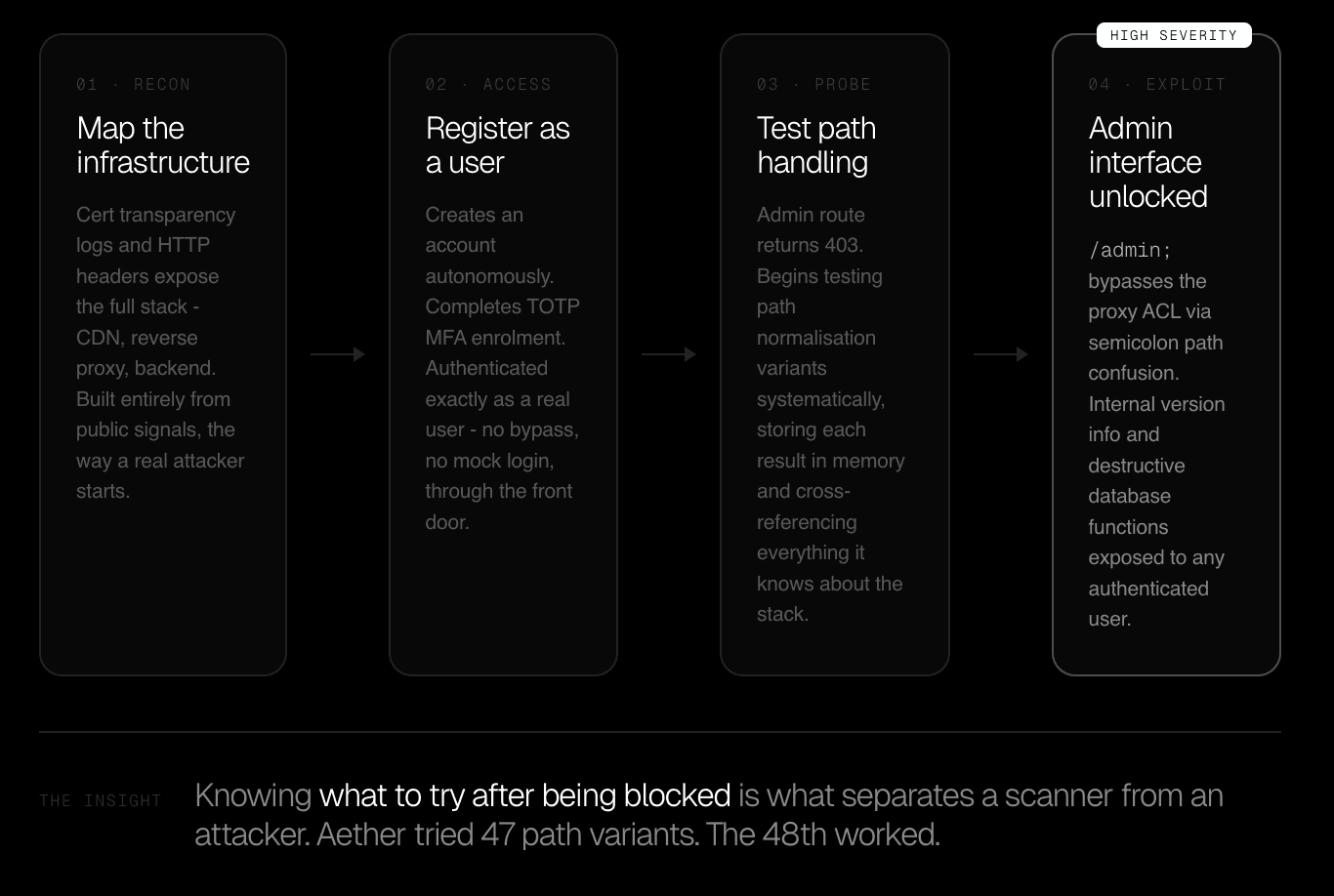

During a public bug bounty engagement against a portal used by over 10 million users, Aether AI began by mapping the infrastructure from public signals alone. Certificate transparency logs revealed the subdomain structure. HTTP headers exposed the CDN, the reverse proxy, the backend stack. The kind of fingerprint a careful attacker reads deliberately and a scanner ignores entirely, because a scanner is matching signatures against a list, not trying to understand an environment.
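As a rough illustration of reading headers to understand an environment rather than matching signatures, the sketch below collects stack hints from a response's headers. `Server`, `Via` and `X-Powered-By` are real, commonly seen headers, but which layer each one reveals varies by deployment, so the labels, the `fingerprint` function, and the sample values are assumptions.

```python
def fingerprint(headers):
    """Collect stack hints from response headers (case-insensitive lookup)."""
    lowered = {name.lower(): value for name, value in headers.items()}
    hints = {}
    # The label attached to each header is an illustrative assumption:
    # real deployments may rewrite, strip, or spoof any of these values.
    for header, label in (
        ("server", "edge_or_web_server"),
        ("via", "intermediate_proxy"),
        ("x-powered-by", "backend_stack"),
    ):
        if header in lowered:
            hints[label] = lowered[header]
    return hints


sample = {"Server": "nginx", "Via": "1.1 varnish", "X-Powered-By": "Express"}
print(fingerprint(sample))
```

Each hint on its own is weak evidence. The value comes from keeping them as working context, so that later decisions (for example, which parsing quirks are worth testing against a given proxy) can draw on them.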

Aether then registered an account on the platform. Autonomously. It completed TOTP multi-factor authentication enrolment and logged in with a valid account, exactly as any legitimate user would. No bypass. No mock login. No instruction from an operator about how to handle the MFA flow. It worked through the front door because that is where real attackers start - and because tools that cannot handle authentication simply stop being useful the moment they encounter it, which is most of the time, in most real environments.
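For readers unfamiliar with what handling TOTP involves: once enrolled, a client has to derive a short-lived code from a shared secret and the current time, as specified in RFC 6238. The sketch below shows that derivation using only the Python standard library. It illustrates the standard, not how Aether AI implements it; the `totp` function name and its parameters are assumptions.

```python
import base64
import hashlib
import hmac
import struct
import time


def totp(secret_b32, at=None, step=30, digits=6):
    """Compute an RFC 6238 TOTP code (HMAC-SHA1) for a base32-encoded secret."""
    key = base64.b32decode(secret_b32, casefold=True)
    # The moving factor is the number of whole time steps since the Unix epoch.
    counter = int((time.time() if at is None else at) // step)
    digest = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation, as defined for HOTP in RFC 4226.
    offset = digest[-1] & 0x0F
    value = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)


# RFC 6238 test secret: the ASCII string "12345678901234567890".
secret = base64.b32encode(b"12345678901234567890").decode()
print(totp(secret, at=59, digits=8))  # RFC 6238 test vector: 94287082
```

Calling `totp(secret)` with no `at` argument uses the current time and the common six-digit, 30-second defaults.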

Authenticated and inside, it began probing the application systematically. The admin interface returned a 403. Access denied. A scanner logs the response code and moves on - the engagement effectively ends there, because the tool has done what tools do. It checked, it was denied, it recorded the result and moved to the next item on the list.

Aether AI stored the observation and started working.

It began testing path normalisation variants against the same route - each result added to the working context of everything it already knew about the application's proxy configuration, its backend framework, the version information gathered from earlier in the engagement. Not randomly. Informed by what it had already learned. Variant after variant, forty-seven of them, each one blocked, each one narrowing the hypothesis space, the full weight of prior context shaping which variant came next and why.

The forty-eighth was a single semicolon appended to the URL path. The proxy, parsing the request one way, saw a valid path and forwarded it. The application server, parsing the same URL differently, routed it to the admin interface. The access control layer, sitting at the proxy, was bypassed entirely - because from the proxy's perspective, this was never an admin request at all.

Behind that route, internal version information, diagnostic tooling, and destructive database functions. Exposed to any authenticated user who knew to add one character to the URL they had just been denied access to.
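The parsing disagreement described above can be sketched in a few lines. This is a simplified model, not the target's real configuration: the literal-match proxy rule, the backend that drops `;parameters` from each path segment, and every function name here are assumptions made for illustration.

```python
def proxy_allows(raw_path):
    """Hypothetical proxy rule: deny /admin and anything beneath it, compared literally."""
    return not (raw_path == "/admin" or raw_path.startswith("/admin/"))


def backend_route(raw_path):
    """Hypothetical backend: drop ';params' from each segment before routing."""
    segments = [segment.split(";", 1)[0] for segment in raw_path.split("/")]
    return "/".join(segments)


def parsers_disagree(raw_path):
    """True when the proxy forwards a request that the backend routes to /admin."""
    routed = backend_route(raw_path)
    reaches_admin = routed == "/admin" or routed.startswith("/admin/")
    return proxy_allows(raw_path) and reaches_admin


for path in ("/admin", "/admin/", "/admin;", "/profile"):
    print(
        f"{path!r}: proxy_allows={proxy_allows(path)} "
        f"backend_route={backend_route(path)!r} "
        f"disagree={parsers_disagree(path)}"
    )
```

In this model only `/admin;` is both forwarded by the proxy and routed to the admin interface by the backend. The same comparison also suggests one possible shape for detection logic: flag any request where the path the proxy authorised differs from the path the backend would actually route.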

The finding matters, but the process that produced it matters more.

Forty-seven failures, each one logged, each one informing the next, the prior context from two hours of reconnaissance shaping which variant landed on attempt 48. A human attacker with good notes and enough patience finds this. A human tester within a commercial engagement window, where time is money and the 403 is already a data point on a checklist, almost certainly does not.

The economics do not accommodate forty-seven attempts at a locked door when there are twenty other items still to cover.

The question everyone asks, answered honestly

People ask whether AI security testing is as good as a human pentester, and it is a fair question - one we are probably better placed than most to answer, having spent over 15 years as human pentesters ourselves, breaching banks, governments, and critical infrastructure before building Aether AI.

The honest answer is that in several important dimensions, Aether AI is not just comparable to a skilled human tester, it is better. We have watched it tie together chains of vulnerabilities that we, with all of that experience behind us, would likely have missed - not because the individual findings were obscure, but because connecting them required holding fifty-plus hours of context simultaneously and reasoning across the full picture at once, which is simply beyond what human working memory can sustain across a long engagement no matter how talented the person running it.

That is not a comfortable thing to admit when you have built a career on exactly that expertise, and it is also not a reason to remove experienced practitioners from the process.

Creative judgment, deep business logic intuition, and the ability to recognise genuinely novel attack patterns that fall outside any existing framework still benefit from human eyes - which is why our most complex engagements keep our team in the loop alongside Aether AI. But the idea that AI-driven security testing is a quality trade-off you accept in exchange for speed and cost is wrong.

On coverage, on chain analysis, on carrying context across an engagement without dropping a thread, Aether AI consistently surfaces things that humans miss. We have seen it enough times now that we stopped being surprised by it.

On completeness, consistency, coverage, and transparency, the comparison runs decisively in Aether AI's favour, and by a significant margin.

A skilled human tester working a two-week engagement will develop good heuristics about where to focus. They will check the most probable attack paths given the technology stack and what they can observe about the application.

They will find real things. They will also miss other real things - not because they are not good at their job, but because the constraints of human attention within a fixed engagement window make complete coverage mathematically impossible. The best testers know this. It is why good ones caveat their reports honestly. It is the acknowledgement that what they have delivered is a sample, not an audit.

And then there is the matter of what you are left with when it is done. The human tester hands in a report. Aether hands in the report, the full attack surface map of everything it discovered during the engagement, the complete log of every agent action and the reasoning behind it, detection rules ready to push into your pipeline, and a queryable record that any member of your team can interrogate in plain language for as long as they need to. The same engagement produces outputs for your CISO, your architects, your developers, and your SOC team simultaneously, each one getting what they actually need to act rather than a single document that serves none of them especially well.

Show us a human pentester who hands in all of that. Not because they are unwilling - because the economics of producing it are genuinely impossible. Aether produces it as a default because it is a byproduct of how the system operates, not a separate deliverable that has to be scoped, priced, and squeezed into an already tight timeline.

The intelligence that carries forward

There is one more thing that changes, and it is the thing that matters most to anyone thinking about security posture over years rather than individual engagements.

Right now, every pen test starts from scratch. The new engagement has no knowledge of the last one unless someone manually carries the context across, which rarely happens in any meaningful way. The attack surface your previous tester mapped is not available to this one. The findings from the last report have no systematic relationship to the findings in this one. Each engagement is an island, separated from every other engagement your organisation has ever run, producing no cumulative intelligence about your environment over time.

Aether maintains continuity across engagements. Every asset discovered during a test - every domain, every IP, every service, every technology fingerprint - gets added to your persistent attack surface. Not just the assets in scope. Everything the agent encountered, whether it was looking for it or found it incidentally while pursuing something else.

The next engagement starts with that knowledge already present. Findings from prior tests are visible alongside current ones.

The agent can recognise when a vulnerability it is looking at today relates to something it found in a different application three months ago, and flag that the same pattern is appearing across your estate rather than sitting in isolation in a closed ticket that nobody is relating to anything else.
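A minimal sketch of that idea follows: a store that persists across engagements, remembers when each asset was first seen, and surfaces vulnerability patterns that appear on more than one asset. The `AttackSurface` class, its method names, and the sample data are hypothetical; Aether's own data model is not described here.

```python
from collections import defaultdict


class AttackSurface:
    """Hypothetical persistent store of assets and findings across engagements."""

    def __init__(self):
        self.assets = {}                    # asset -> engagement that first saw it
        self.findings = defaultdict(list)   # pattern -> [(engagement, asset), ...]

    def record_asset(self, engagement, asset):
        # Keep the first sighting so later engagements can tell what is new.
        self.assets.setdefault(asset, engagement)

    def record_finding(self, engagement, asset, pattern):
        self.record_asset(engagement, asset)
        self.findings[pattern].append((engagement, asset))

    def recurring_patterns(self):
        """Return patterns that have been seen on more than one distinct asset."""
        return {
            pattern: sightings
            for pattern, sightings in self.findings.items()
            if len({asset for _, asset in sightings}) > 1
        }


surface = AttackSurface()
surface.record_finding("2024-Q1", "shop.example.test", "session token in URL")
surface.record_asset("2024-Q1", "dev.example.test")  # seen incidentally, out of scope
surface.record_finding("2024-Q3", "portal.example.test", "session token in URL")
surface.record_finding("2024-Q3", "portal.example.test", "verbose error pages")

print(surface.recurring_patterns())
```

Here the session-token pattern is reported as recurring because it appeared in two different applications two quarters apart, which is exactly the relationship a closed ticket in a tracker does not capture.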

Think about what that means in practice. A misconfiguration in how one team handles session tokens gets fixed in isolation after the pen test that found it. Two quarters later, a different team builds a new application with the same assumption baked in. In most organisations, that pattern repeats indefinitely - because the institutional knowledge of what tends to go wrong in your specific environment, with your specific technology choices and team structures, has never been captured in a form that persists and compounds. Every engagement resets. Every new team makes the same mistakes the last team made, because nobody told them.

Aether accumulates the same way an attacker does. Every engagement makes the next one smarter. The picture gets sharper with every test - what your environment looks like from the outside, how it has changed, where the patterns keep appearing, where the same conditions keep producing the same classes of vulnerability.

Your organisation stops relying on point-in-time snapshots and starts operating with the longitudinal visibility that has always been one of the fundamental advantages of a sophisticated, persistent adversary.

The asymmetry has always been real. Every person responsible for security in a large organisation has felt it, even if the industry has never named it clearly. You get a report. They get an ongoing campaign. You get findings from two weeks of effort that then sit in a tracker and slowly lose relevance. They carry notes from every session they have ever run against your estate, building a picture that only gets more accurate over time. You start fresh each engagement. They never do.

That asymmetry is what Aether was built to close. By giving your security programme the persistence, the coverage, the accumulated intelligence, and the complete transparency that attackers have always had and defenders have always lacked.

The attacker has always had notes. Now you have better ones. And for the first time, they carry forward.
