Aether AI - The agentic attack AI that learns from every pentest so your defences improve at machine speed


The architecture behind a system that gets more dangerous with every engagement it runs.

The security industry has spent the last few years bolting language models onto scanners and calling it AI pentesting. The output is predictable: an LLM reads Nmap results and writes a report, a better interface to the same tooling the industry has always used. Nothing in the underlying model improves with use, nothing compounds, nothing learns. Every engagement starts from zero.

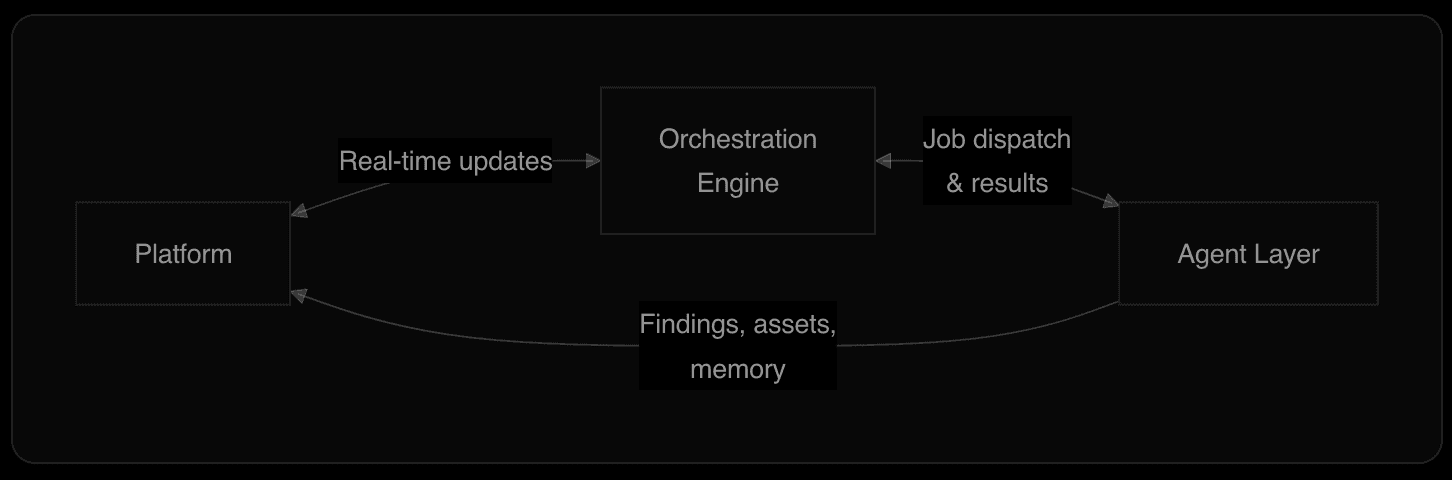

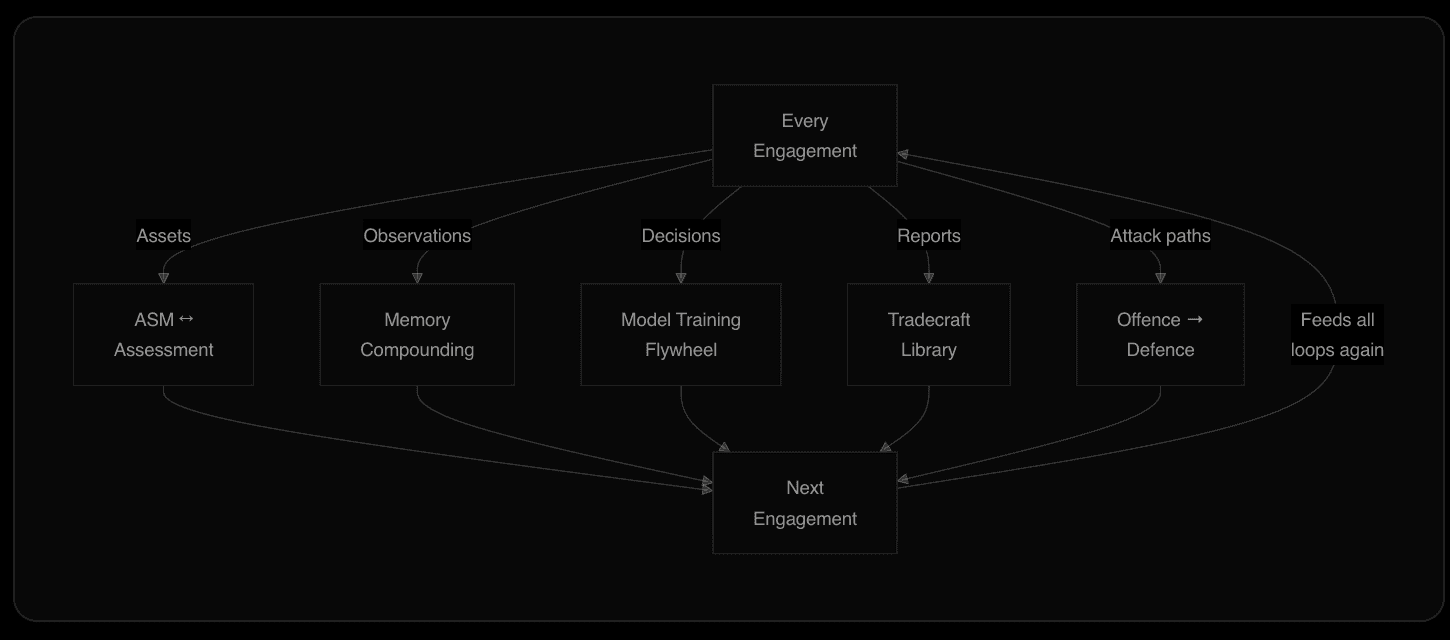

Aether AI is built differently - a closed-loop system where every component feeds every other component. Attack surface discovery informs penetration testing, penetration testing produces findings that refine attack surface understanding, and every decision the AI makes during an engagement is recorded, curated, and fed back into the offensive model through supervised fine-tuning. The system metabolises engagements.

Feedback loops make the architecture work. They produce a capability that compounds over time rather than plateauing.

Several layers, one intelligence

The platform manages projects, attack surface inventory, findings, and the conversational AI interface where customers interact with the same intelligence that ran their engagement. The orchestration engine drives the assessment lifecycle and coordinates multiple agents working in parallel. The agent layer is where attacks execute: a multi-agent system on dedicated offensive infrastructure with specialised tool servers and a three-model intelligence core.

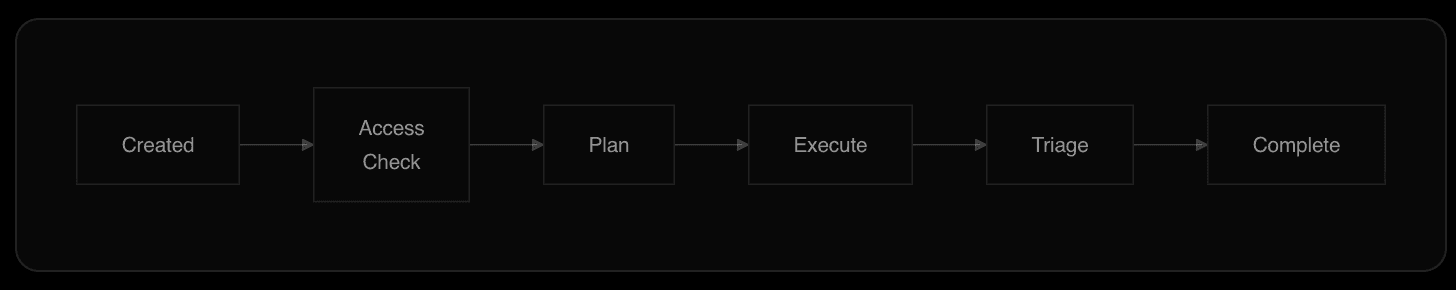

The assessment pipeline follows an event-driven state machine. Each transition is automatic - no human touches the pipeline unless they choose to.
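As a rough illustration of what an event-driven state machine for an assessment lifecycle can look like, here is a minimal sketch. The stage names, event names, and the `AssessmentPipeline` class are all hypothetical; the article does not list the real ones.

```python
from enum import Enum, auto


class Stage(Enum):
    # Hypothetical stage names, chosen only for illustration.
    SCOPED = auto()
    DISCOVERY = auto()
    TESTING = auto()
    REPORTING = auto()
    COMPLETE = auto()


# (current stage, event) -> next stage. Event names are assumptions too.
TRANSITIONS = {
    (Stage.SCOPED, "scope_confirmed"): Stage.DISCOVERY,
    (Stage.DISCOVERY, "surface_mapped"): Stage.TESTING,
    (Stage.TESTING, "testing_finished"): Stage.REPORTING,
    (Stage.REPORTING, "report_published"): Stage.COMPLETE,
}


class AssessmentPipeline:
    def __init__(self) -> None:
        self.stage = Stage.SCOPED
        self.history = [Stage.SCOPED]

    def handle(self, event: str) -> Stage:
        """Advance on an event that applies to the current stage; ignore the rest."""
        next_stage = TRANSITIONS.get((self.stage, event))
        if next_stage is not None:
            self.stage = next_stage
            self.history.append(next_stage)
        return self.stage


pipeline = AssessmentPipeline()
# "report_published" arrives out of order here and is ignored.
for event in ["scope_confirmed", "surface_mapped", "report_published", "testing_finished"]:
    pipeline.handle(event)
print(pipeline.stage.name)
```

Keeping the transitions in one table means an out-of-order event cannot move the pipeline into a stage it has not earned, which is one plausible way to make every transition automatic without making it fragile.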

Attack surface ↔ assessment

Most platforms treat attack surface management, penetration testing and red-teaming as separate products, keeping their inventories in separate databases that share a dashboard but little else.

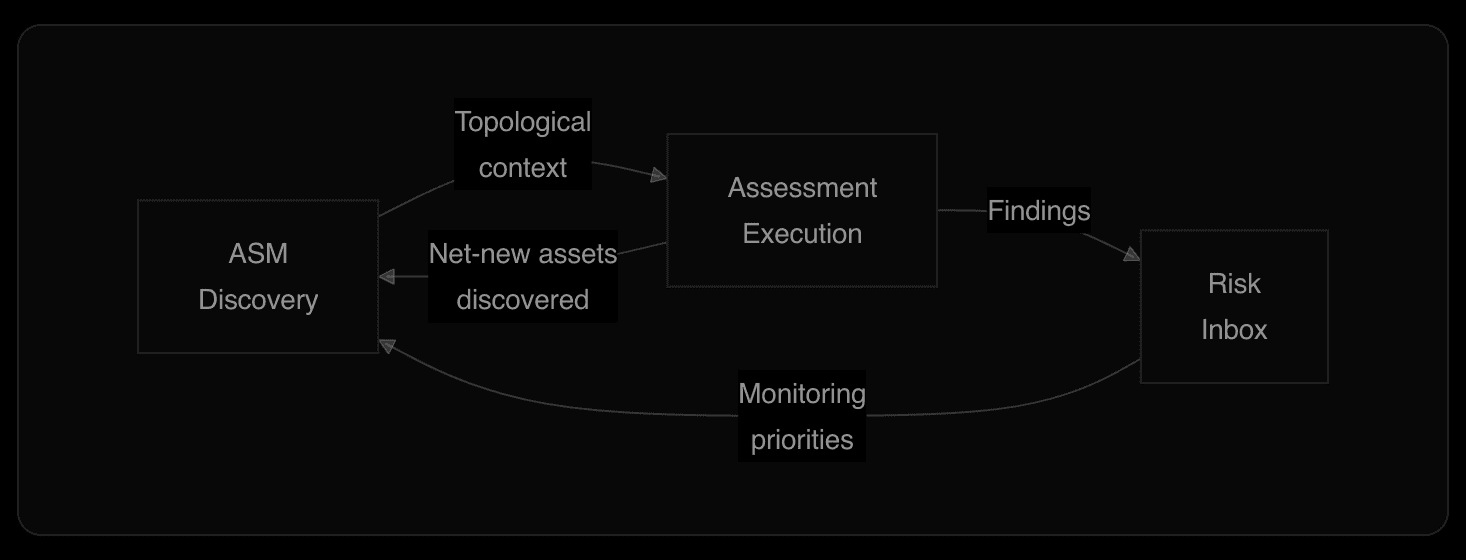

In Aether AI, the attack surface is the starting point for every assessment, and every assessment feeds back into the attack surface. When a customer connects a cloud environment, Aether discovers and imports every reachable asset with full relationship mapping. When the agent launches, it starts from the full topological context that ASM already built.

During execution, the agent discovers things ASM did not. Internal API endpoints exposed in JavaScript bundles. IP addresses not in the cloud inventory. Credentials that unlock access to systems ASM had no visibility into. Every discovery flows back into the attack surface in real time.

Compound effect: The attack surface gets more complete over time. Every future assessment starts from a higher baseline.
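The two-way flow between inventory and assessment can be pictured as a single store that both sides write to. The sketch below is an assumption-laden illustration, not Aether's data model: `Asset`, `AttackSurface`, and the source labels are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class Asset:
    identifier: str                       # hostname or IP address
    sources: set[str] = field(default_factory=set)


class AttackSurface:
    """One inventory written to by both cloud import and live assessments."""

    def __init__(self) -> None:
        self.assets: dict[str, Asset] = {}

    def record(self, identifier: str, source: str) -> bool:
        """Add or update an asset; return True if it was previously unknown."""
        key = identifier.strip().lower()
        is_new = key not in self.assets
        self.assets.setdefault(key, Asset(key)).sources.add(source)
        return is_new


surface = AttackSurface()
surface.record("api.example.com", "cloud-import")
# The same host seen again during testing adds provenance, not a duplicate.
seen_again = surface.record("API.example.com", "assessment:js-bundle")
# An IP the cloud inventory never listed becomes a new asset.
newly_found = surface.record("10.20.30.40", "assessment:cookie-decode")
```

Recording where each asset was seen keeps the provenance of every discovery, so a later assessment can tell imported assets from ones found in the field.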

Memory that compounds across engagements

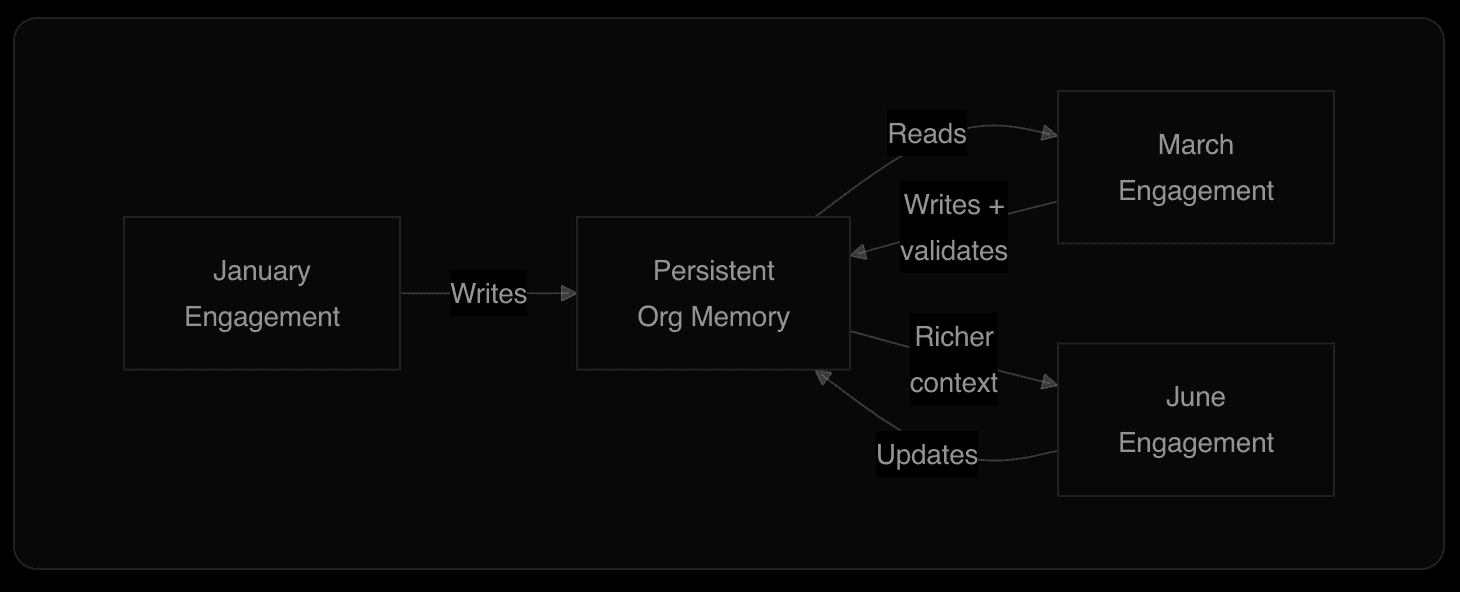

Every assessment produces a persistent knowledge base that is strictly isolated to that organisation. No customer's data, findings, memory, or intelligence is ever shared with or accessible to another customer. The isolation is enforced at the data layer - each organisation's knowledge store is completely siloed. What compounds is that organisation's own offensive and defensive intelligence, which makes Aether AI increasingly effective at both attacking and defending their specific environment over time - the same way a skilled human would, whether an adversary learning the target or an internal team adapting to threats.

When the scanning agent discovers that an application uses a custom JWT implementation with a shared signing key across microservices, it writes that to memory. When a subagent documents that a particular WAF passes SVG-based XSS through, it writes that to memory.

On the next engagement, weeks or months later, the lead agent reads the memory index before building its plan. It already knows the architecture, the deployment cadence, which evasion techniques work. It does not waste time rediscovering what a previous agent already mapped.

Memory functions as hypothesis rather than settled fact. If a previous agent wrote that something was blocked, the current agent re-attempts. Defences change. A blocker from January becomes a starting point in March.
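One way to picture memory as hypothesis is a planner that never reads a stored claim as a verdict, only as a task to re-check. This is a minimal sketch under assumed names: the `MemoryEntry` schema, the `plan_from_memory` function, and the second memory entry are illustrative and not taken from the platform.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class MemoryEntry:
    # Hypothetical schema; field names are illustrative.
    claim: str
    outcome: str          # "worked" or "blocked"
    observed_on: date


def plan_from_memory(entries: list[MemoryEntry], today: date) -> list[str]:
    """Turn remembered claims into tasks. Nothing is trusted without a re-check."""
    tasks = []
    for entry in sorted(entries, key=lambda e: e.observed_on):
        age_days = (today - entry.observed_on).days
        if entry.outcome == "blocked":
            # A past blocker is a starting point, not a reason to skip.
            tasks.append(f"re-attempt: {entry.claim} (blocked {age_days} days ago)")
        else:
            tasks.append(f"re-verify: {entry.claim} (worked {age_days} days ago)")
    return tasks


memory = [
    MemoryEntry("WAF passes SVG-based XSS", "worked", date(2025, 1, 20)),
    MemoryEntry("login rate limit could not be bypassed", "blocked", date(2025, 1, 10)),
]
tasks = plan_from_memory(memory, today=date(2025, 3, 1))
```

Carrying the age of each observation into the task makes it explicit that a January result is being tested against a March environment.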

Compound effect: Every engagement deposits knowledge. Every future engagement withdraws it. Understanding deepens without human curation.

The loop that closes the gap frontier models can't

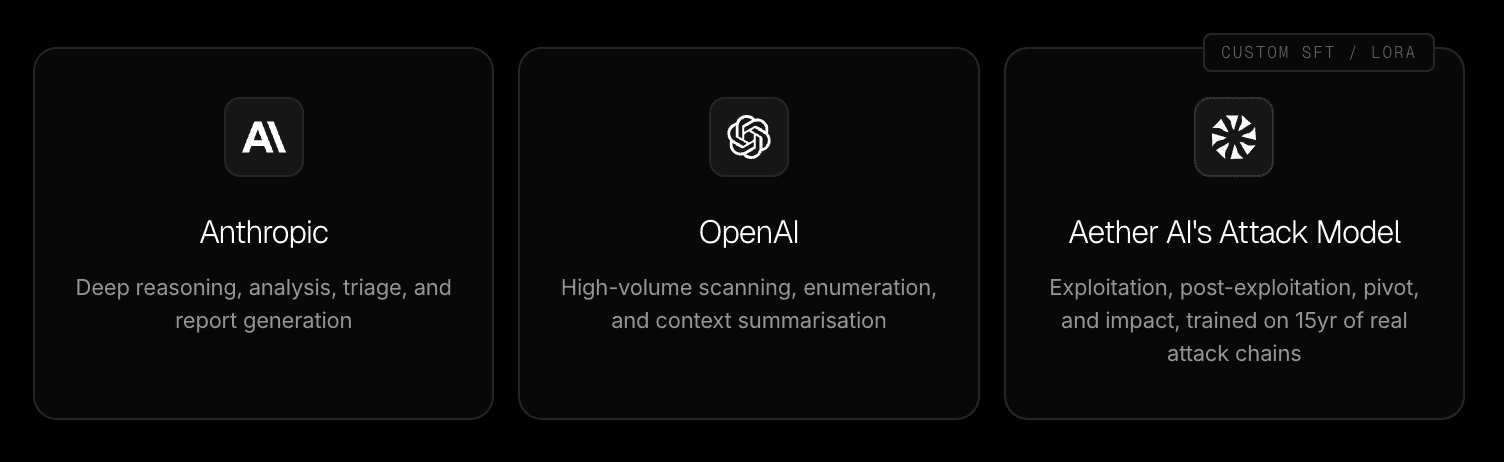

Aether AI runs a three-model architecture: two frontier models for general intelligence and reasoning, and a custom offensive model we built and continuously train for the tasks frontier models refuse or fail at.

Frontier models are extraordinary at reasoning, planning, and analysis, and Aether uses them for exactly that. The reasons they cannot do what Aether's attack model does are structural and compounding.

The training data problem

Frontier models are trained on the internet's security corpus: CVE databases, bug bounty reports, responsible disclosure write-ups. Every data source is built around the same incentive - find the vulnerability, report it, stop.

The MITRE ATT&CK framework maps 14 tactical phases of a real attack. Public training data densely covers the first three: Reconnaissance, Resource Development and Initial Access. The other 11 - Execution, Persistence, Privilege Escalation, Defence Evasion, Credential Access, Discovery, Lateral Movement, Collection, C2, Exfiltration, Impact - have almost no corresponding public data.

Those phases happen after the door opens, and the data from real compromises almost never makes it into the public record in full form. Occasionally an IR report surfaces - Mandiant or CrowdStrike might publish a redacted case study, a court filing forces disclosure. But these are the exception.

The structural incentives run the other way, and understandably so: a detailed disclosure of how an attacker moved through your infrastructure is a share price event. Boards do not volunteer the exact privilege escalation path from a standard user account to domain admin. Legal counsel locks down forensic reports under privilege. Regulatory filings contain the minimum required detail. Sometimes there are explicit gag orders. The result is that the richest operational data in offensive security, the full post-exploitation tradecraft, stays locked in forensic vaults, classified government reports, and the records of the firms that legally conducted those attacks.

The safety guardrail problem

Even when frontier models have the latent capability to reason about exploitation, they are specifically trained to refuse. RLHF and constitutional AI alignment actively suppress offensive output. Ask a frontier model to generate a working exploit for a confirmed vulnerability and it will decline, suggest you contact the vendor, or produce a sanitised version that does not actually work. These guardrails exist for good reason, but they make the model useless for authorised offensive operations where proving exploitability is the entire job. The cost is real: finding deeper attack paths can be the difference between being secured against a company-ending attack and being unprepared for one.

The evidence

Anthropic demonstrated both problems simultaneously in their Mozilla Firefox security research. Claude found 22 vulnerabilities in Firefox in two weeks, 14 rated high severity, and over 500 zero-day vulnerabilities across open-source software. Finding bugs at that scale is genuinely impressive. But when they tried to turn those discoveries into working exploits, they ran several hundred attempts, spent $4,000 in API credits, and succeeded exactly twice. Both exploits only worked in testing environments with security features stripped out.

Their own conclusion: "Claude is much better at finding these bugs than it is at exploiting them." They acknowledged this gap is unlikely to last very long, but also that when frontier models do gain exploitation capability, they will need additional safety constraints to prevent misuse. The better they get at attacking, the more restricted they become. That is the structural ceiling.

Why Aether AI's model exists below that ceiling

We used supervised fine-tuning and LoRA to train a purpose-built offensive model on 15 years of full attack chains drawn directly from the experience of Aether AI's co-founders: legal compromises of governments, banks, casinos, and critical infrastructure, spanning every phase from initial access through to impact. This model generates working exploits, executes post-exploitation tradecraft, pivots through networks, and demonstrates real impact - purpose-built for authorised offensive operations, deployed only within the platform, against targets where the customer has provided explicit authorisation. The safety boundary is the platform itself.

But the first version was just the seed. Here is where the flywheel starts turning.

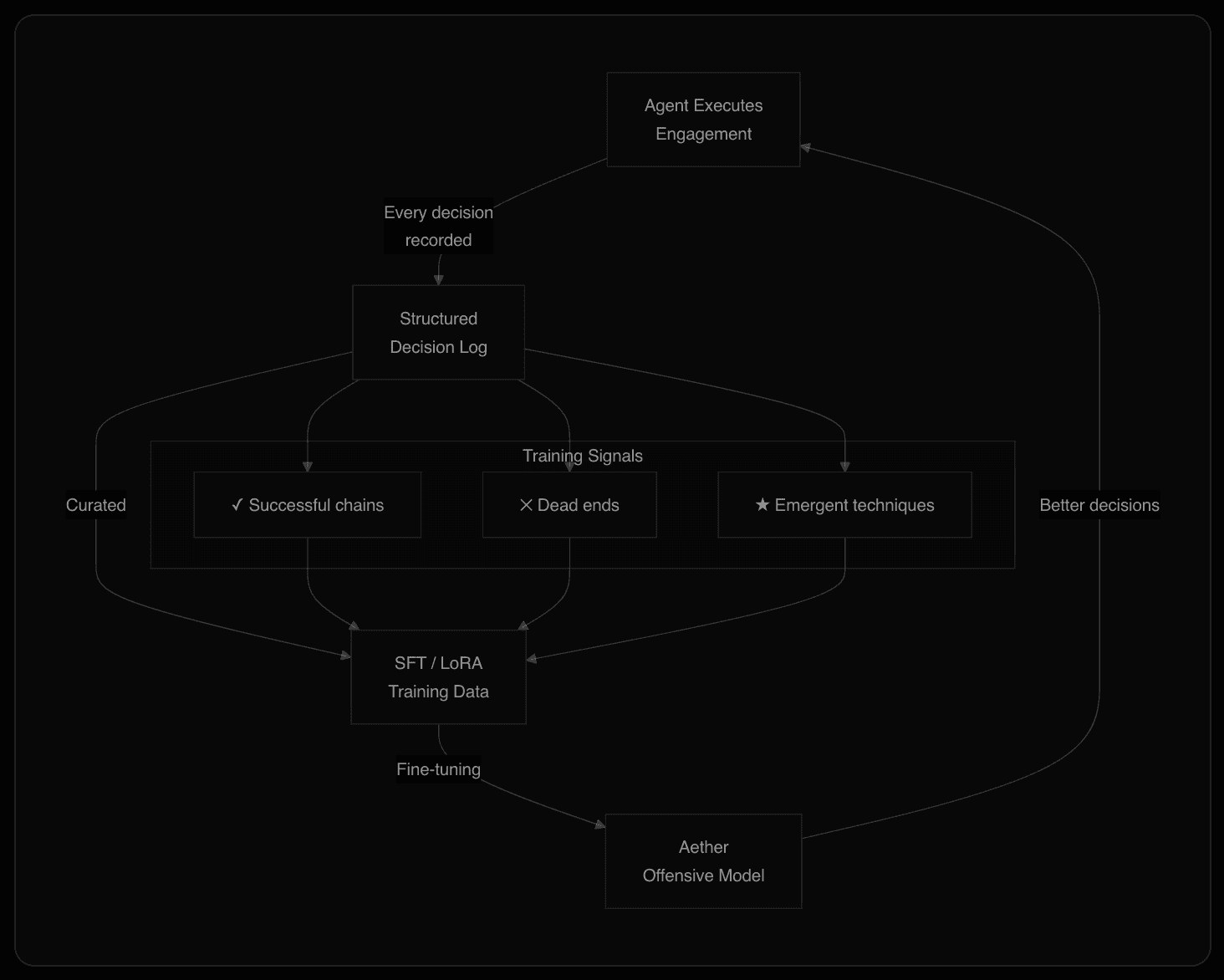

Every engagement generates new training data

The system records every decision the agent makes - every tool invocation, every payload selected, every pivot attempted. That decision data is curated and fed back through SFT and LoRA. Successful attack chains become positive examples. Dead ends become negative examples. Emergent techniques the agent discovers in the field become the most valuable examples of all. Critically, the training pipeline uses generalised technique and decision-pattern data. No customer-specific information, target identifiers, or findings data ever enters the training set. Each customer's data remains entirely isolated. What the model learns is how to attack better, not what it found in your environment.

Compound effect: Every engagement makes the model more capable. Frontier models train on static public data. Aether's model trains on live operational output that grows daily. The gap widens with every assessment run.

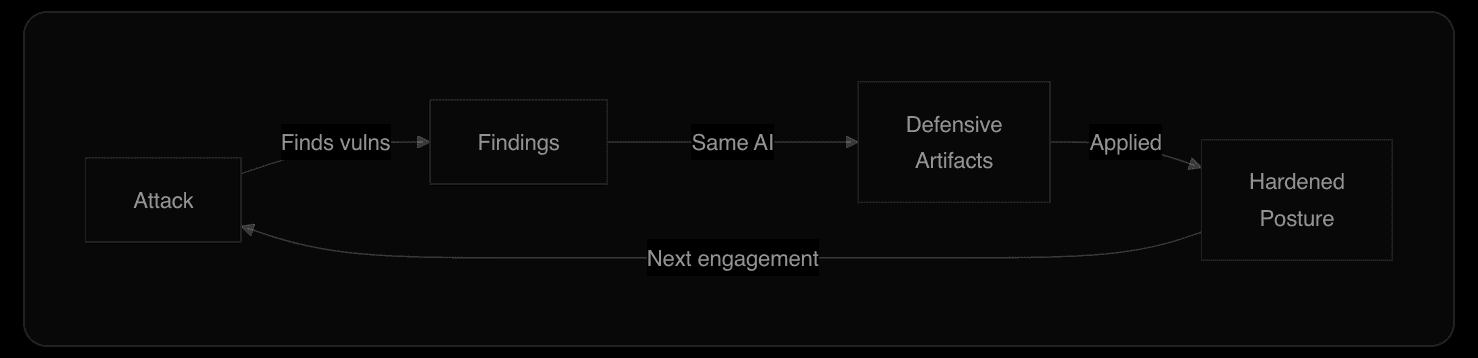

Offence informs defence

The same AI that compromised the environment helps defend it. Customers interact directly with the intelligence that ran the attack - the same reasoning, the same contextual understanding of their specific environment. It produces detection rules for their SIEM, DevSecOps pipeline configs, and hardening guidance specific to their stack, each drawn from the attacks that actually succeeded.

Compound effect: The next engagement tests the hardened posture and finds the gaps that remain. The attacker and defender are the same AI.

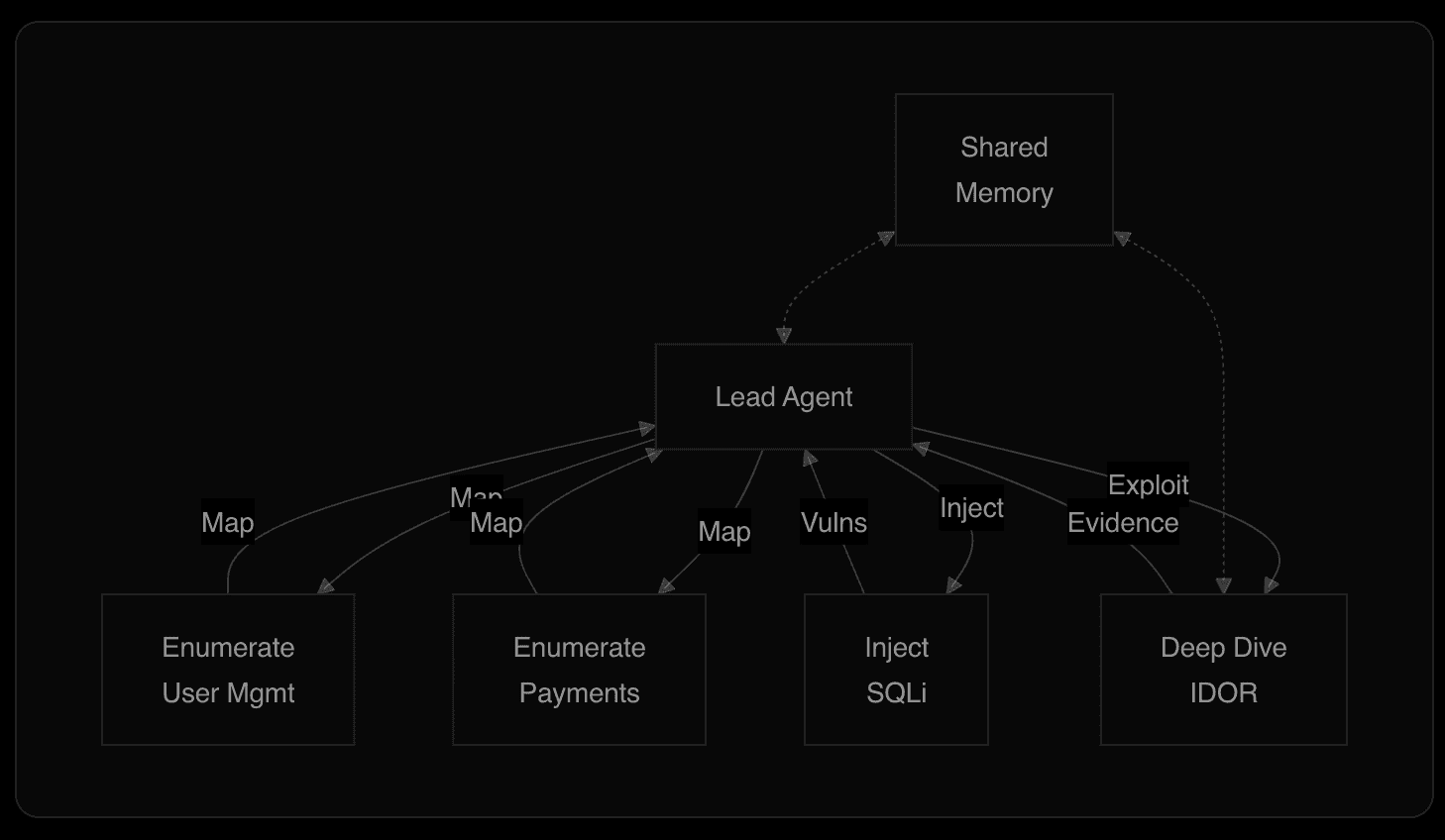

How the attack executes

The agent layer runs as a coordinated team. A lead agent manages strategy. Enumerate agents map functional areas in parallel. Deep-dive agents take a specific finding and fully exploit it. Injection specialists handle systematic payload delivery. All share unified memory.

A fourth model, separate from the three-model core, runs continuously to handle context summarisation, compressing completed work during multi-day engagements without losing critical detail. This enables 50+ hour engagements without context degradation.
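The general shape of rolling context compression can be sketched as follows: once a working log exceeds a budget, older entries are folded into a single summary while the most recent ones stay verbatim. Everything here is an assumption for illustration. `compact`, its parameters, and `stub_summariser` are hypothetical, and the stub merely counts entries where a real summarisation model would preserve their substance.

```python
from typing import Callable


def compact(log: list[str], budget_chars: int, keep_recent: int,
            summarise: Callable[[list[str]], str]) -> list[str]:
    """Fold older entries into one summary once the log exceeds its budget."""
    split = len(log) - keep_recent
    total = sum(len(entry) for entry in log)
    if total <= budget_chars or split <= 0:
        return list(log)          # Under budget, or nothing old enough to fold.
    return [summarise(log[:split])] + log[split:]


def stub_summariser(entries: list[str]) -> str:
    # Stand-in for the summarisation model; it only counts what it folded.
    return f"[summary of {len(entries)} earlier entries]"


log = [f"step {i}: enumerated host {i}" for i in range(1, 6)]
compacted = compact(log, budget_chars=60, keep_recent=2, summarise=stub_summariser)
```

Keeping the latest entries untouched is a common design choice: the work in progress stays exact, and only completed work is compressed.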

What this actually looks like

On a recent assessment against a global enterprise's attack surface (52 in-scope hosts), the agent produced 567 documented activity entries over a continuous multi-day engagement. Each entry is a strategic decision about what to do next and why.

Parallel enumeration at machine speed

Within 15 minutes, the lead agent launched parallel enumeration across nine targets simultaneously. By the 1-hour mark, it had consolidated 70+ discovered endpoints, having already identified exposed API schemas with 553 routes, payment integrations across four providers, and pre-release staging infrastructure publicly accessible.

Research before attack

Before testing a single endpoint, the agent queried the report library for similar technology stacks. When it found IBM software running in the environment, it researched IBM-specific attack vectors from prior engagements. When it discovered PingFederate SSO infrastructure, it pulled historical findings on OIDC misconfigurations. Every test was informed by operational history before a payload was sent.

Discovery chains into discovery

Hardcoded API endpoints in client-side JavaScript led to an unauthenticated feature flag API. That led to testing input validation, which revealed NoSQL operator injection. Separately, certificate transparency enumeration surfaced 800+ subdomains, which led to staging environments, which exposed Sentry DSNs and inline configuration, which revealed internal infrastructure topology. Each finding opened new attack surface that the agent immediately pursued.

Deep dives on high-value targets

When the lead agent discovered an attack vector against the organisation's WAF, it spawned a deep-dive agent that tested variations, confirmed the bypass reached the target's backend, enumerated over 100,000 data entries, and documented the complete exploitation chain. The lead agent continued working other targets in parallel.

Cross-surface chaining

The agent worked across every host as a connected estate. CORS misconfigurations on one domain were tested against credentials from another. Internal IPs decoded from load balancer cookies were correlated with infrastructure exposed through staging configuration leaks. Azure Blob Storage accounts discovered through CSP headers on admin portals were probed for public access, and one was found containing personally identifiable information downloadable without authentication.

Self-correcting and deduplicating

The agent continuously triaged its own output. Duplicates were merged. Inaccurate reproduction steps were corrected. Severity was reclassified when evidence warranted it - the Azure storage finding escalated from low to high after confirming actual data exposure, an OAuth vector downgraded after confirming remediation.
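A simple version of this kind of self-triage can be sketched with a merge step and an explicit reclassification step. The `Finding` schema, the `(host, category)` fingerprint, and both function names are hypothetical; the article does not describe how the agent actually identifies duplicates.

```python
from dataclasses import dataclass, field

SEVERITY_ORDER = ["info", "low", "medium", "high", "critical"]


@dataclass
class Finding:
    host: str
    category: str
    severity: str
    evidence: list[str] = field(default_factory=list)


def merge_duplicates(findings: list[Finding]) -> list[Finding]:
    """Merge findings sharing (host, category); keep the highest severity and all evidence."""
    merged: dict[tuple[str, str], Finding] = {}
    for f in findings:
        key = (f.host.lower(), f.category)
        if key not in merged:
            merged[key] = Finding(f.host.lower(), f.category, f.severity, list(f.evidence))
            continue
        kept = merged[key]
        kept.evidence.extend(e for e in f.evidence if e not in kept.evidence)
        if SEVERITY_ORDER.index(f.severity) > SEVERITY_ORDER.index(kept.severity):
            kept.severity = f.severity
    return list(merged.values())


def reclassify(finding: Finding, new_severity: str, reason: str) -> None:
    """Change severity in either direction and keep the reason on record."""
    finding.evidence.append(f"severity {finding.severity} -> {new_severity}: {reason}")
    finding.severity = new_severity


raw = [
    Finding("storage.example.com", "public-blob-access", "low", ["container listing allowed"]),
    Finding("Storage.example.com", "public-blob-access", "high", ["file downloaded without auth"]),
    Finding("sso.example.com", "oauth-redirect", "medium", ["open redirect in callback"]),
]
triaged = merge_duplicates(raw)
reclassify(triaged[1], "info", "remediation confirmed on retest")
```

Merging defaults to the highest severity seen, while a downgrade has to go through `reclassify` with a stated reason, so that severity never drops silently.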

Concurrent report generation

While the lead agent tested, dedicated report-writer agents ran in parallel - researching vendor-specific remediation, validating documentation URLs, producing formal findings with hardening steps specific to the exact environment. When the agent found deprecated TLS on WAF-related hosts, the report documented the exact dashboard path and API method to enforce TLS 1.2.

The result: 50+ formally documented findings across infrastructure disclosure, authentication weaknesses, WAF bypass, cloud storage exposure, and application misconfiguration - produced autonomously, each with complete reproduction steps, vendor-specific remediation, and validated evidence. The agent filed, triaged, deduplicated, and corrected its own work throughout. This is an operation.

Where it exceeds human operators

The obvious comparison is that Aether AI operates like an advanced human red team - and it does. But the comparison only goes so far. In several critical dimensions, the system is structurally better than human operators, because the architecture enforces a consistency that human attention cannot sustain (we'll be releasing public research to back this up in the near future).

Nothing gets skipped

A human operator running an 824-item checklist against 52 hosts will, by hour 30, start making judgment calls about what to skip. Low-severity items get deprioritised. The seventh staging environment gets a lighter pass than the first. This is human attention economics. The agent operates without that constraint - it runs every checklist item against every applicable host with the same rigour at hour 50 as it did at hour 1. In the engagement above, the agent documented an expired TLS certificate on a legacy redirect endpoint, build metadata in HTML comments across six hosts, and missing Permissions-Policy headers across 16 hosts - none critical in isolation, but all of them of reconnaissance value to an attacker building a chain.

Informational findings are chain material

Human pentest reports routinely classify informational items as low-priority noise. CISOs skim past them. Developers ignore them. But a sophisticated attacker treats every piece of information as a potential link. An internal IP address leaked in a load balancer cookie is useless on its own. Combined with a staging environment exposing the same IP range, and a CORS misconfiguration allowing cross-origin requests with credentials, it becomes the first three links of an exploitation chain. The agent decoded F5 BIG-IP cookies to extract internal datacenter IPs, correlated them with infrastructure exposed through staging configuration leaks, and documented the relationship. A human tester might note the cookie decoding. They almost certainly would not cross-reference it against every other piece of infrastructure intelligence across 52 hosts.
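The cookie decoding mentioned above relies on an encoding that F5 documents publicly for unencrypted IPv4 persistence cookies: the address is a decimal integer with its bytes reversed, and the port is a 16-bit value with its two bytes swapped. The sketch below covers only that default format; the function name is hypothetical, and the sample value is the well-known documentation-style example rather than data from the engagement.

```python
import socket
import struct


def decode_bigip_cookie(value: str) -> tuple[str, int]:
    """Decode an unencrypted IPv4 BIG-IP persistence cookie value."""
    # Expected shape: "<encoded ip>.<encoded port>.0000"; other shapes raise ValueError.
    ip_part, port_part, _ = value.split(".")
    # The address is stored as a decimal integer with its bytes reversed.
    ip = socket.inet_ntoa(struct.pack("<I", int(ip_part)))
    # The port is a 16-bit value with its two bytes swapped.
    port = struct.unpack("<H", struct.pack(">H", int(port_part)))[0]
    return ip, port


ip, port = decode_bigip_cookie("1677787402.36895.0000")
print(ip, port)  # 10.1.1.100 8080
```

For defenders, the practical takeaway is that this cookie format is an encoding, not encryption; F5 provides a cookie encryption option for this reason.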

Consistency at scale

The agent tested HTTP security headers across all 52 hosts - not a sample. It tested CORS on every endpoint that returned Access-Control headers - not just the first. When it found disposable email acceptance in registration, it immediately tested the same pattern across every other registration endpoint on the estate, confirming the weakness on five environments within minutes. A human team tests the first instance thoroughly and then "verifies" the rest with decreasing rigour.
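Checking every host rather than a sample is easy to express once the responses are collected. This sketch works on already-captured response headers and makes no network requests; the `REQUIRED_HEADERS` list and the `missing_headers` function are illustrative assumptions, not the platform's checklist.

```python
# Illustrative baseline; a real checklist would be longer and context-dependent.
REQUIRED_HEADERS = [
    "Strict-Transport-Security",
    "Content-Security-Policy",
    "X-Content-Type-Options",
    "Permissions-Policy",
]


def missing_headers(responses: dict[str, dict[str, str]]) -> dict[str, list[str]]:
    """Map each host to the required headers absent from its response."""
    report = {}
    for host, headers in responses.items():
        # Header names are case-insensitive, so compare in lower case.
        present = {name.lower() for name in headers}
        gaps = [h for h in REQUIRED_HEADERS if h.lower() not in present]
        if gaps:
            report[host] = gaps
    return report


captured = {
    "www.example.com": {
        "strict-transport-security": "max-age=31536000",
        "Content-Security-Policy": "default-src 'self'",
        "X-Content-Type-Options": "nosniff",
        "Permissions-Policy": "geolocation=()",
    },
    "staging.example.com": {
        "X-Content-Type-Options": "nosniff",
    },
}
gaps = missing_headers(captured)
```

Because the loop runs over the whole dictionary, the last host gets exactly the same check as the first, which is the property the paragraph above describes.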

No ego, no anchoring

Human operators anchor on their initial assessment. If a tester decides early that a target is well-defended, they unconsciously reduce testing intensity. If they spend four hours on a dead end, they are reluctant to pivot. The agent has no attachment to any hypothesis. When it confirms that restrictions block content modification via an HTTP method override bypass, it immediately downgrades the finding and moves to the next vector. No hesitation, no sunk cost.

Total recall across the entire engagement

By hour 40, a human tester has forgotten the specific response headers from host #7 at hour 3. The agent has not. When it discovered Azure Blob Storage account names in CSP headers on admin portals at hour 12, it stored that intelligence. When it reached cloud storage testing at hour 16, it immediately enumerated those exact accounts - and found one with public access containing PII. That connection across a 4-hour gap, linking a CSP header to cloud storage exploitation, is the kind of chain humans miss - working memory degrades over multi-day engagements in ways that compound across 52 hosts.

The point: The architecture eliminates the structural weaknesses of human-led assessments - inconsistency, attention fatigue, anchoring bias, working memory limits - while retaining adversarial reasoning and creative exploitation. The result is genuinely more thorough testing - nothing left to chance, nothing skipped for expediency, and nothing forgotten between Tuesday's reconnaissance and Thursday's exploitation.

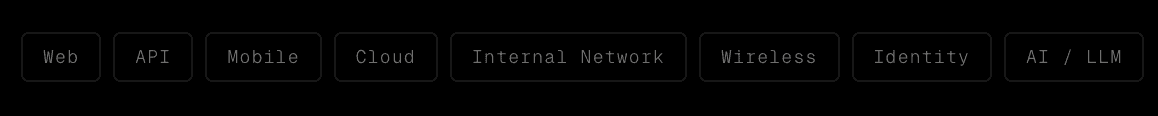

Eight surfaces, simultaneously

What makes this work is that all eight surfaces are tested by the same AI, in the same engagement, with shared context throughout. A leaked API key from mobile testing is immediately used against the cloud environment. A credential from web exploitation is tested against the internal network. The chain is the product.

Why the loops matter more than the components

Where competitors are optimising individual components of a linear process - a better scanner, a smarter agent - this architecture is built entirely around feedback loops.

Each loop runs independently, all sharing the same data substrate - every engagement feeds every loop simultaneously. The compound effect multiplies rather than accumulates.

A competitor can replicate any individual component. They cannot replicate the 15 years of operational experience that seeded the loops, and they cannot replicate the compounding effect of thousands of engagements that have already passed through them.

The system gets more dangerous every day it runs, and so do you. That is the architecture.

Aether AI

The world's most dangerous attack AI, in your hands protecting you.
