Static Analysis Is Not Mobile Pentesting. Here Is the Gap.

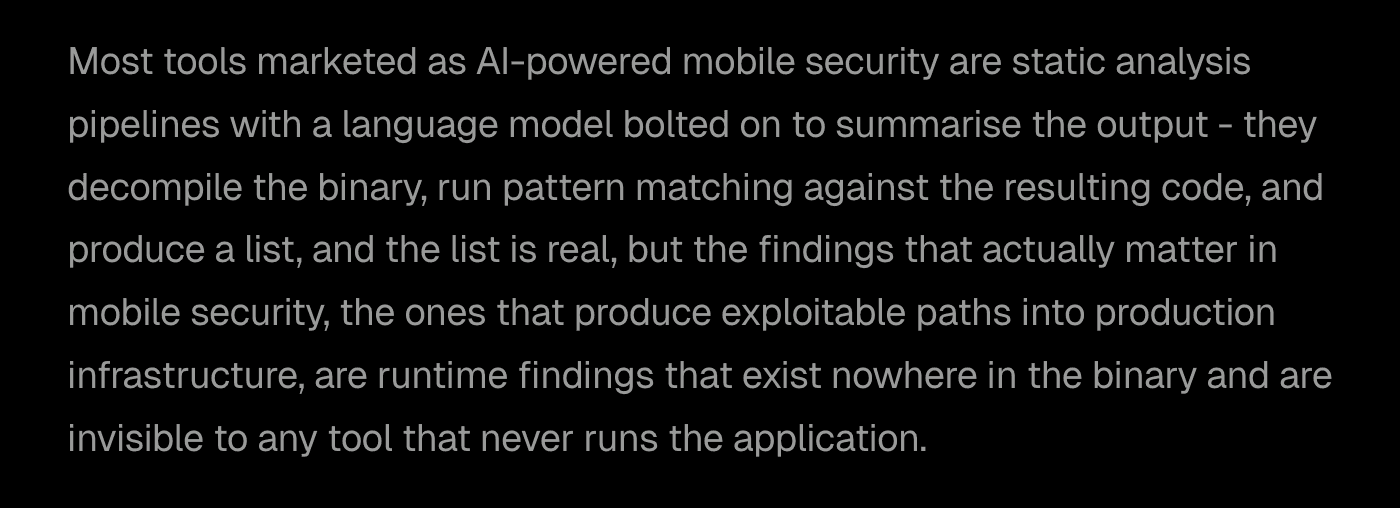

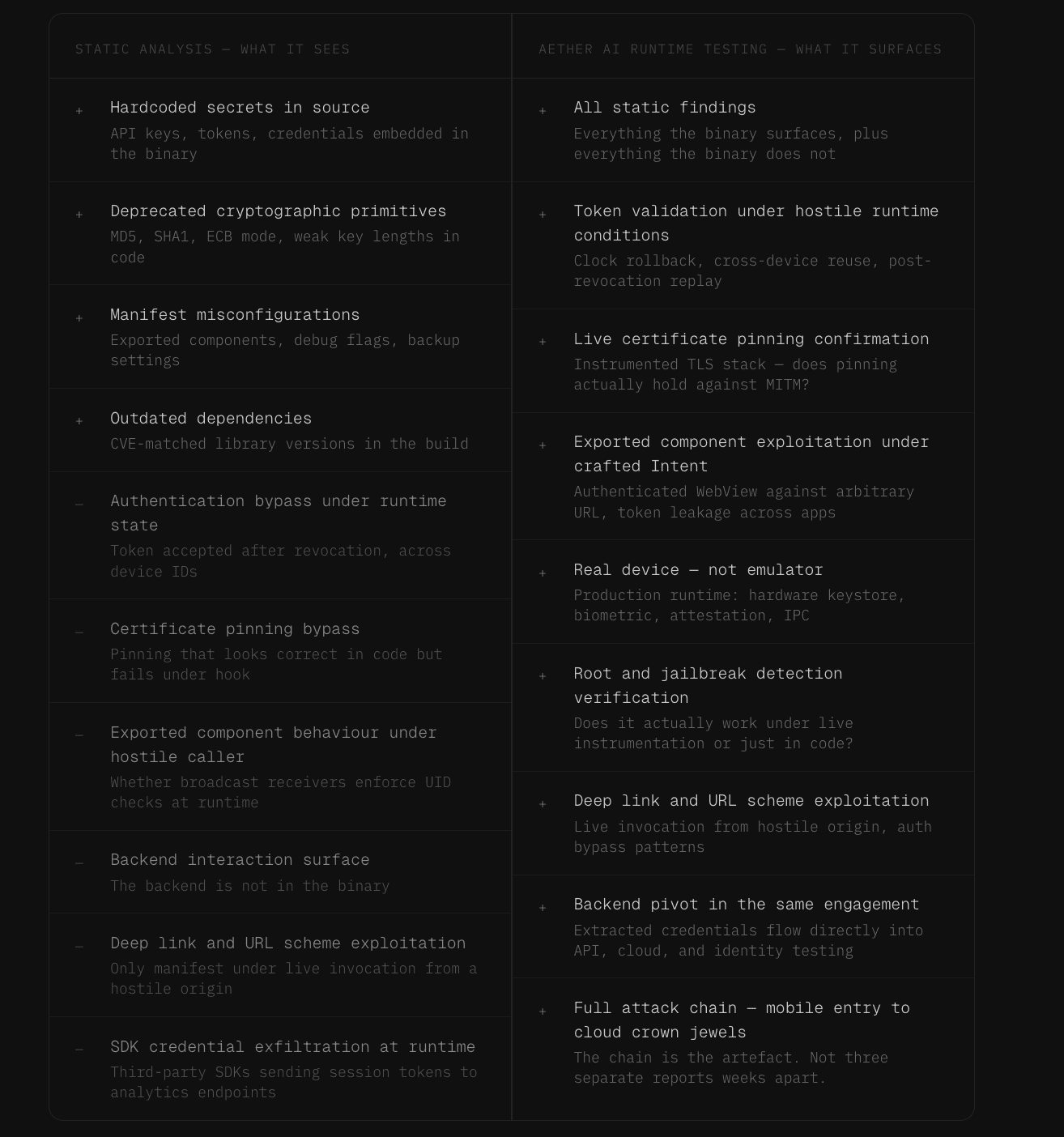

Static analysis catches what is obvious from source code: hardcoded API keys, weak cryptographic primitives, insecure storage paths, and deprecated dependencies with known CVEs. That baseline is real and worth having. What static analysis structurally cannot catch is anything that depends on runtime state, and in modern mobile applications that includes the entire class of authentication, authorisation, and session-handling vulnerabilities that makes up the actual exploitable surface.

The backend is not in the binary. The device is not in the binary. The order, timing, and composition of operations under a hostile caller are not something any static tool can observe, and those are precisely the conditions under which the findings that matter most emerge.

What runtime testing surfaces that static never will

Consider a worked example that recurs often enough to be representative. A finance application stores authentication tokens in the iOS Keychain using the correct access control API. Static analysis sees the call, finds nothing to flag, and marks the category clean. Yet the same application accepts that token from a different device identifier, accepts it after the device clock has been rolled back, and accepts it long after the user revoked the session from a second device.
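The three failures in that example correspond to three properties the backend has to enforce, and none of them is visible in the client binary. Below is a minimal Python sketch of a session store that enforces all three, assuming a hypothetical `SessionStore` with `issue`, `validate`, and `revoke` methods and a device identifier sent as a plain string. It illustrates what a runtime test probes for, not how any particular backend is built.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import Dict, Optional, Set


@dataclass
class Session:
    device_id: str     # device the token was bound to when it was issued
    expires_at: float  # expiry on the SERVER clock (seconds since the epoch)


@dataclass
class SessionStore:
    """Hypothetical in-memory store; a real backend would persist this state."""

    sessions: Dict[str, Session] = field(default_factory=dict)
    revoked: Set[str] = field(default_factory=set)

    def issue(self, device_id: str, ttl_seconds: float, now: Optional[float] = None) -> str:
        now = time.time() if now is None else now
        token = secrets.token_urlsafe(32)
        self.sessions[token] = Session(device_id, now + ttl_seconds)
        return token

    def revoke(self, token: str) -> None:
        # Called when the user ends this session from another device.
        self.revoked.add(token)

    def validate(self, token: str, device_id: str, now: Optional[float] = None) -> bool:
        # `now` is server time; nothing the client reports about its own clock
        # is consulted, so rolling the device clock back cannot extend a session.
        now = time.time() if now is None else now
        session = self.sessions.get(token)
        if session is None or token in self.revoked:
            return False
        if session.device_id != device_id:
            return False  # token replayed from a different device
        return now < session.expires_at
```

A runtime test exercises exactly these branches: replay the token from a second device, replay it after rolling the device clock back, and replay it after revoking the session elsewhere. A client-reported identifier is the weakest form of device binding; the sketch uses one only to keep the example short.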

Three findings, all critical, none present in the binary, and a static report that would have assessed this application as well-implemented.

The same pattern holds across every category of runtime risk. Certificate pinning looks correct in code but is bypassed in seconds because the pinning logic sits in a function the developer assumed was opaque. Deep link handlers become exploitable only when the application is invoked from a hostile context. WebView configurations look clean in the manifest but allow JavaScript bridge invocation when the application is in a debuggable state. Keychain assignments are correct at write time and catastrophic in deployment because a background process reads the data before the user has authenticated to the device. None of these findings exists in the binary, and none is findable by a tool that never runs the application.

The Android runtime layer

Android presents a runtime surface that static analysis is structurally unable to characterise. Exported components, broadcast receivers, and content providers can be inventoried statically, but their actual behaviour under invocation is a runtime question: whether a broadcast receiver checks the calling UID, whether a content provider enforces access controls on the URIs it serves, whether an activity validates Intent extras. Those questions are only answerable by running the application against a hostile caller. The pattern that surfaces consistently across engagements is an application that looks well-behaved in static review but has an exported activity that, when invoked with a crafted Intent, accepts arbitrary deep links and loads any URL the attacker supplies in an authenticated WebView.
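Whether such an exported activity is exploitable often comes down to how it validates the URL it is about to load. The minimal Python sketch below uses a hypothetical host allowlist (`ALLOWED_HOSTS`) and hypothetical function names. It contrasts a substring check of the kind that survives a quick code read with a check that parses the URL and requires HTTPS plus an exact host match. On Android the same logic would live in Kotlin or Java; Python is used here only for illustration.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist of hosts the WebView may load with the user's session.
ALLOWED_HOSTS = {"app.example.com", "help.example.com"}


def naive_is_allowed(url: str) -> bool:
    # Flawed: any URL that merely mentions the trusted domain passes.
    return "example.com" in url


def strict_is_allowed(url: str) -> bool:
    parts = urlsplit(url)
    # Require HTTPS and an exact match on the parsed host. urlsplit puts anything
    # before "@" into the userinfo, so https://app.example.com@evil.test/ yields
    # the host evil.test. The .hostname attribute is already lowercased.
    return (
        parts.scheme == "https"
        and parts.hostname is not None
        and parts.hostname in ALLOWED_HOSTS
    )
```

The `https://app.example.com@evil.test/` case is why parsing matters: the substring check accepts it, while the host the WebView would actually load is `evil.test`. Whether the application applies a check like this on every Intent path into the WebView is still something only a runtime test answers.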

Certificate pinning on Android has become a class of finding that static review cannot settle. Naive implementations are obvious from code, but non-naive implementations look correct in code and still fail under a runtime hooking attack against the underlying TLS library or against the verification function at the Kotlin layer. If the running application cannot be instrumented against an active man-in-the-middle to confirm that pinning actually holds, the test is only checking that someone wrote a pinning function and assuming it works. Root detection and tamper detection follow the same pattern: apps that implement root detection frequently have detection logic that fails when invoked from an instrumented context, when the relevant string comparison is intercepted at runtime, or when the application has been re-signed with a debug certificate.
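A correct-looking pinning implementation can still fail because the whole security decision usually reduces to one function's return value. The minimal Python sketch below uses monkeypatching as a stand-in for the runtime hook an instrumentation framework installs in a native process; the mechanism differs, but the effect is the same. The pin format follows the `sha256/<base64 of the SPKI SHA-256 hash>` convention used by OkHttp's `CertificatePinner`. The class, the method names, and the placeholder key bytes are hypothetical.

```python
import base64
import hashlib


def spki_pin(spki_der: bytes) -> str:
    """Pin string for a DER-encoded SubjectPublicKeyInfo: 'sha256/' + base64(SHA-256)."""
    digest = hashlib.sha256(spki_der).digest()
    return "sha256/" + base64.b64encode(digest).decode("ascii")


class PinningClient:
    """Hypothetical client whose connect() trusts only pinned public keys."""

    def __init__(self, pins: set):
        self.pins = pins

    def verify_pin(self, spki_der: bytes) -> bool:
        return spki_pin(spki_der) in self.pins

    def connect(self, spki_der: bytes) -> str:
        # The entire pinning decision rests on this one boolean.
        if not self.verify_pin(spki_der):
            raise ConnectionError("certificate pin mismatch")
        return "connected"


def demonstrate_hook_bypass() -> tuple:
    # Placeholder bytes standing in for real DER-encoded public keys.
    server_key = b"placeholder-server-spki"
    proxy_key = b"placeholder-interception-proxy-spki"

    client = PinningClient({spki_pin(server_key)})

    # Uninstrumented: the interception proxy's key is rejected.
    try:
        client.connect(proxy_key)
        blocked_before_hook = False
    except ConnectionError:
        blocked_before_hook = True

    # Instrumented: replace the verification function so it always passes,
    # which is the effect a runtime hook has on the real implementation.
    client.verify_pin = lambda spki_der: True
    result_after_hook = client.connect(proxy_key)

    return blocked_before_hook, result_after_hook
```

Static review sees a well-formed pin set and a verification call. Only a test that runs the application behind an interception proxy, with and without hooks, shows whether the decision can be overridden.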

The iOS runtime layer

iOS presents a parallel set of runtime questions that static analysis cannot answer. Keychain policy and file protection class assignments are among the most consistent sources of the gap. They are routinely correct in code and routinely catastrophic in deployment, because of the interaction between the protection class assigned at write time and the device state at read time. An application that stores sensitive material with the correct access control flag, but reads it from a background process that runs before the user authenticates, exposes that data on a locked device. Static review marks that as compliant; runtime testing exposes it as a production vulnerability.
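The interaction between the class chosen at write time and the device state at read time can be modelled as a small table. The Python sketch below uses Apple's `kSecAttrAccessibleWhenUnlocked` and `kSecAttrAccessibleAfterFirstUnlock` constant names, but the model itself is a simplified assumption rather than the Keychain's implementation. It ignores `ThisDeviceOnly` variants, access-control flags, and passcode requirements, and the function names are hypothetical.

```python
from enum import Enum


class DeviceState(Enum):
    BEFORE_FIRST_UNLOCK = "booted, not yet unlocked"
    LOCKED = "locked, unlocked at least once since boot"
    UNLOCKED = "unlocked"


# Simplified model: the device states in which each protection class can be read.
READABLE_IN = {
    "kSecAttrAccessibleWhenUnlocked": {DeviceState.UNLOCKED},
    "kSecAttrAccessibleAfterFirstUnlock": {DeviceState.LOCKED, DeviceState.UNLOCKED},
}


def keychain_read(protection_class: str, state: DeviceState) -> str:
    if state in READABLE_IN[protection_class]:
        return "item returned"
    # On a real device this read fails with errSecInteractionNotAllowed.
    return "read denied"


def readable_while_locked(protection_class: str) -> bool:
    """True if a background task running on a locked device can read the item."""
    return DeviceState.LOCKED in READABLE_IN[protection_class]
```

One way this plays out: a background read that fails under `WhenUnlocked` on a locked device gets "fixed" by moving the item to `AfterFirstUnlock`, and from then on the item is readable whenever the device has been unlocked once since boot. Only exercising the background path on a locked physical device shows which combination the shipped application actually hits.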

URL scheme handling is a runtime category by definition. The Info.plist may register schemes correctly, but what happens when the application is invoked through those schemes from a hostile origin is not in the code; it is in the runtime behaviour. URL-scheme-triggered authentication bypasses, deep-link account takeover patterns, and JavaScript bridge invocations that only manifest under live invocation from another application or from Safari are consistently surfaced by runtime testing and consistently invisible to static review.
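Because any application or web page can open a registered scheme, a handler that completes a login must prove that the callback belongs to a login the application itself started. The minimal Python sketch below shows that check for a hypothetical `myapp://oauth/callback?code=...&state=...` handler, using the OAuth 2.0 `state` parameter as the proof. The scheme, class, and method names are assumptions for illustration; an iOS application would implement this in Swift inside its URL-handling entry point.

```python
import secrets
from typing import Optional
from urllib.parse import parse_qs, urlsplit


class DeepLinkHandler:
    """Hypothetical handler for myapp://oauth/callback?code=...&state=..."""

    def __init__(self) -> None:
        self.pending_state: Optional[str] = None

    def begin_login(self) -> str:
        # Generate an unguessable, single-use state value before opening the login page.
        self.pending_state = secrets.token_urlsafe(16)
        return self.pending_state

    def handle(self, url: str) -> str:
        parts = urlsplit(url)
        if (parts.scheme, parts.netloc, parts.path) != ("myapp", "oauth", "/callback"):
            return "ignored"
        params = parse_qs(parts.query)
        code = params.get("code", [None])[0]
        state = params.get("state", [None])[0]
        # Without this check, a hostile app or page can inject an authorization
        # code of its choosing and bind the session to an account it controls.
        if (
            code is None
            or state is None
            or self.pending_state is None
            or not secrets.compare_digest(state.encode(), self.pending_state.encode())
        ):
            return "rejected"
        self.pending_state = None  # consume the state so the link cannot be replayed
        return f"exchange code {code}"
```

A runtime test opens the scheme from a second application or from Safari with forged, missing, and replayed `state` values. The registration in Info.plist looks identical whether or not this check exists.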

App Transport Security exceptions that look correct in the configuration file routinely permit downgrade attacks against domains the developer did not realise were transitively in scope through SDK calls. Likewise, pasteboard handling that appears normal in static review routinely leaks credentials into the system pasteboard during specific runtime workflows, and the leak only becomes visible when the application is actually being used.
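A static audit can list the cleartext exceptions in Info.plist, but it cannot tell which hosts the application, and the SDKs it bundles, actually contact under them. The minimal Python sketch below cross-references the two, assuming the observed hosts come from intercepted runtime traffic. It uses the standard-library `plistlib` and the real ATS keys `NSExceptionDomains`, `NSIncludesSubdomains`, and `NSExceptionAllowsInsecureHTTPLoads`. It deliberately ignores `NSAllowsArbitraryLoads` and TLS-version keys, and the domain names are hypothetical.

```python
import plistlib
from typing import List

# Hypothetical Info.plist fragment: one SDK CDN domain with a cleartext exception.
SAMPLE_INFO_PLIST = b"""<?xml version="1.0" encoding="UTF-8"?>
<plist version="1.0">
<dict>
  <key>NSAppTransportSecurity</key>
  <dict>
    <key>NSExceptionDomains</key>
    <dict>
      <key>cdn.example-sdk.com</key>
      <dict>
        <key>NSIncludesSubdomains</key>
        <true/>
        <key>NSExceptionAllowsInsecureHTTPLoads</key>
        <true/>
      </dict>
    </dict>
  </dict>
</dict>
</plist>
"""


def hosts_allowed_cleartext(info_plist: bytes, observed_hosts: List[str]) -> List[str]:
    """Return observed hosts covered by an ATS exception that permits plain HTTP.

    Simplified: ignores NSAllowsArbitraryLoads and TLS-version exception keys.
    """
    ats = plistlib.loads(info_plist).get("NSAppTransportSecurity", {})
    exceptions = ats.get("NSExceptionDomains", {})
    flagged = []
    for host in observed_hosts:
        for domain, cfg in exceptions.items():
            if not cfg.get("NSExceptionAllowsInsecureHTTPLoads", False):
                continue
            covers_subdomains = cfg.get("NSIncludesSubdomains", False)
            if host == domain or (covers_subdomains and host.endswith("." + domain)):
                flagged.append(host)
                break
    return flagged
```

The static half (the exception list) rarely changes between releases; the runtime half (the hosts) changes whenever an SDK does, which is why the same configuration file can be safe in one build and exposed in the next.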

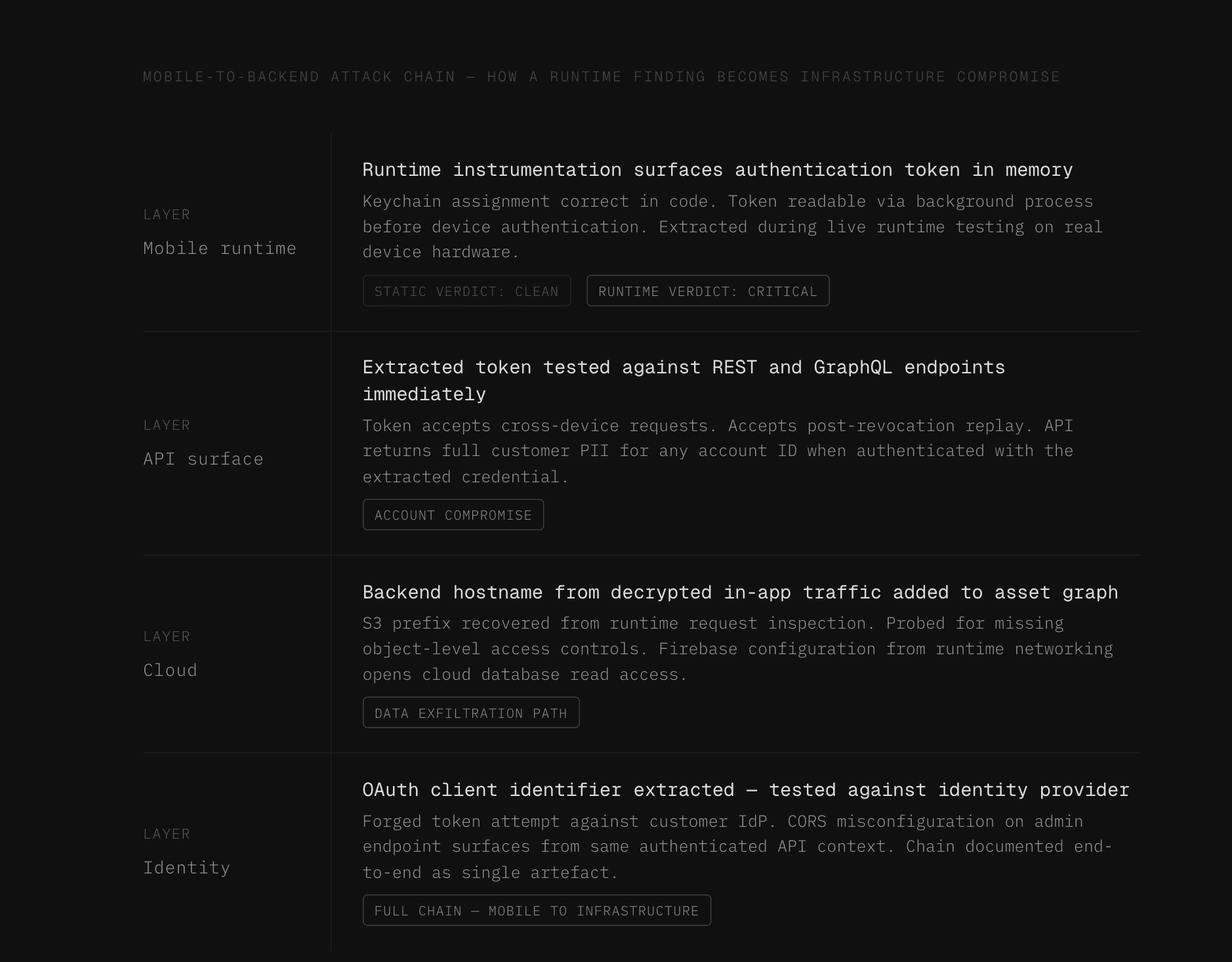

The mobile-to-backend pivot is where the blast radius lives

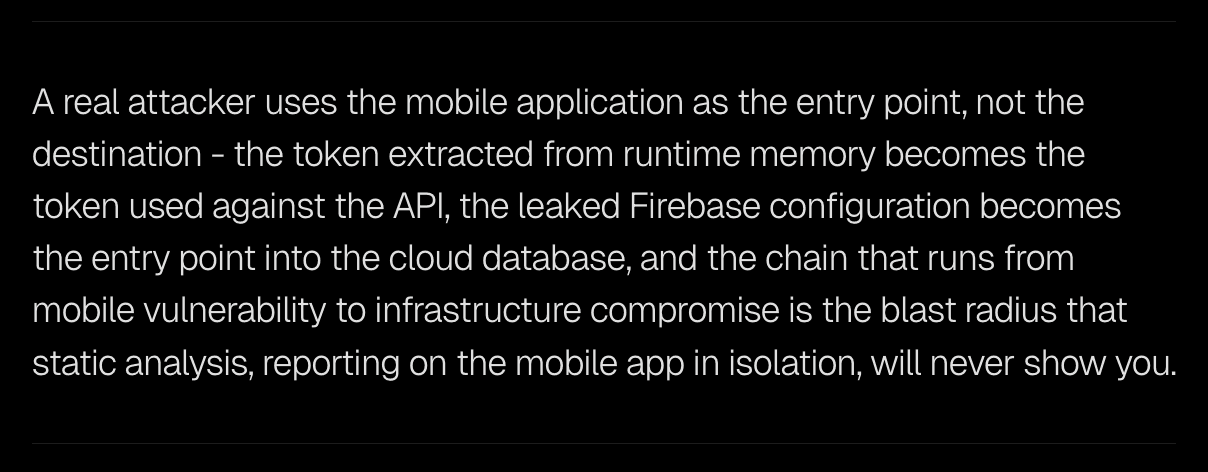

What makes the distinction between static and runtime testing consequential at the business level is not the mobile findings themselves but where those findings lead.

The token extracted from runtime memory flows immediately into API testing. The Firebase configuration leaked in runtime network traffic becomes the entry point into the cloud database. The hardcoded URL recovered from a runtime decryption routine becomes the host probed for misconfigured CORS, exposed admin endpoints, and unauthenticated GraphQL introspection in the same engagement. The OAuth client identifier extracted during runtime instrumentation becomes the identifier used to attempt token forgery against the customer's identity provider. The S3 prefix recovered from a runtime request becomes the prefix probed for missing object-level access controls.
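The hand-off itself can be pictured as a shared context that runtime capture writes into and backend testing reads from. The minimal Python sketch below assumes the captured traffic is available as plain text. The pattern names, the dict-based context, and the sample capture are all hypothetical, and this is not a description of Aether's implementation. Only the legacy `firebaseio.com` and global S3 endpoint forms are matched.

```python
import re
from collections import defaultdict

# Hypothetical pivot patterns; each has one capture group holding the value to keep.
PIVOT_PATTERNS = {
    "firebase_db": re.compile(r"(https://[a-z0-9-]+\.firebaseio\.com)"),
    "s3_object": re.compile(r"https://([a-z0-9.-]+\.s3\.amazonaws\.com/[\w./-]*)"),
    "bearer_token": re.compile(r"Authorization: Bearer (\S+)"),
}


def extract_pivots(captured_traffic: str) -> dict:
    """Collect values from intercepted traffic that backend testing can reuse."""
    context = defaultdict(set)
    for kind, pattern in PIVOT_PATTERNS.items():
        for match in pattern.finditer(captured_traffic):
            context[kind].add(match.group(1))
    return dict(context)


# Hypothetical capture: one request and its response body.
SAMPLE_CAPTURE = """GET /v1/profile HTTP/1.1
Host: api.example.com
Authorization: Bearer eyJhbGciOiJIUzI1NiJ9.e30.c2ln

HTTP/1.1 200 OK
{"db": "https://demo-app-123.firebaseio.com", "avatar": "https://demo-assets.s3.amazonaws.com/users/42/avatar.png"}
"""
```

Each extracted value is a starting point rather than a finding: the token goes to API authorisation tests, the database URL to unauthenticated read checks, and the S3 path to object-level access checks on neighbouring prefixes.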

A finding classified as minor insecure storage on a mobile application is not minor when the storage holds a refresh token that authenticates against an API returning customer PII for any account identifier. The chain connecting those two findings, the mobile finding and the API finding, is precisely what will never be produced by a static tool and a separate dynamic tool each reporting in isolation, with a human analyst attempting to correlate them weeks later.
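The API half of that chain is a broken object-level authorisation flaw (BOLA), the first entry in the OWASP API Security Top 10 (2023). The minimal Python sketch below contrasts an endpoint that only checks that the caller is authenticated with one that checks ownership of the requested object. The `TokenClaims` type, the handler names, and the account data are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenClaims:
    subject: str  # account the token was issued for


# Hypothetical account store keyed by account identifier.
ACCOUNTS = {
    "acct-1001": {"name": "A. Customer", "email": "a@example.com"},
    "acct-1002": {"name": "B. Customer", "email": "b@example.com"},
}


def get_account_vulnerable(claims: TokenClaims, account_id: str) -> dict:
    # Authenticated but not authorised: any valid token can read any account.
    return ACCOUNTS[account_id]


def get_account(claims: TokenClaims, account_id: str) -> dict:
    # Object-level check: the requested account must belong to the token's subject.
    if account_id != claims.subject:
        raise PermissionError("token subject does not own this account")
    return ACCOUNTS[account_id]
```

Severity depends on which version the backend runs. Against `get_account_vulnerable`, the stored refresh token is a key to every customer record; against `get_account`, it exposes one account.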

Aether AI produces one chain - the credential extracted from the mobile runtime is in the API testing context within seconds, the cloud asset enumerated from that authenticated API call is in the asset graph within seconds of discovery, and the full chain from mobile entry point to infrastructure impact is a single artefact at engagement close.

Why "static plus dynamic plus manual" stitched together is not equivalent

The reasonable response to the static-only critique is that a combination of static analysis, dynamic testing, and human pentesting closes the gap, and many security organisations have implemented exactly that combination - but the result is not equivalent to integrated end-to-end testing, for two reasons that are structural rather than a matter of execution quality.

The first is that the integration is absent. The static tool produces a list of code-level findings, the dynamic test produces a separate list of runtime findings, the human produces a third list of business-logic findings, and none of those lists join. Nobody on the engagement knows that the hardcoded constant the static tool flagged is the refresh token the human is testing against, which authenticates against the API the dynamic tool is fuzzing without credentials. The chain connecting all three stays invisible because the data is never shared across the workstreams.
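The missing join can be made concrete. If each finding records the concrete artifacts it touches (a token value, a host, a key), findings from different workstreams can be grouped transitively by shared artifacts. The Python sketch below does this with a union-find (disjoint-set) structure. The `Finding` type, the `find_chains` function, and the example data are hypothetical and describe no particular tool's data model.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Finding:
    source: str                # workstream: "static", "dynamic", or "manual"
    title: str
    artifacts: FrozenSet[str]  # concrete values the finding touches: tokens, hosts, keys


def find_chains(*workstreams: List[Finding]) -> List[List[Finding]]:
    """Group findings that share any artifact, transitively (union-find),
    and keep only groups spanning more than one workstream."""
    findings = [f for stream in workstreams for f in stream]
    parent = list(range(len(findings)))

    def root(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    first_owner = {}
    for i, finding in enumerate(findings):
        for artifact in finding.artifacts:
            if artifact in first_owner:
                parent[root(i)] = root(first_owner[artifact])
            else:
                first_owner[artifact] = i

    groups = defaultdict(list)
    for i, finding in enumerate(findings):
        groups[root(i)].append(finding)
    return [g for g in groups.values() if len({f.source for f in g}) > 1]


# Hypothetical example mirroring the scenario above.
STATIC = [Finding("static", "Hardcoded constant", frozenset({"rt_9f3c"}))]
MANUAL = [Finding("manual", "Refresh token accepted", frozenset({"rt_9f3c", "api.example.com"}))]
DYNAMIC = [
    Finding("dynamic", "Unauthenticated fuzzing of /v1/accounts", frozenset({"api.example.com"})),
    Finding("dynamic", "Verbose error page", frozenset({"www.example.com"})),
]
```

With these inputs the static constant, the manual token test, and the dynamic API finding fall into one group, while the unrelated error-page finding stays on its own. The join is trivial once the artifacts are recorded; the structural problem is that separate workstreams rarely record or share them.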

The second structural problem is tempo.

By the time the static tool has produced its report, the dynamic test has finished, and the human has filed their findings, the customer is reading three separate documents weeks apart and attempting to mentally correlate them, while an attacker moves from mobile entry point to cloud crown jewels in hours.

Aether's architecture eliminates both failure modes by running the same agent across all surfaces in the same engagement against shared context in real time, and the chain that took human penetration testing teams weeks to manually piece together emerges as a single artefact at engagement close.

What Aether AI's mobile testing actually does

Aether's mobile testing runs end-to-end on real physical devices through a dedicated mobile device gateway, not emulators. Emulator testing produces well-documented false negatives across jailbreak and root detection, biometric authentication, hardware-backed keystore behaviour, attestation patterns, and IPC behaviour that differs under virtualisation, so emulator results do not reflect the devices attackers actually target.

On real iOS and Android hardware, the agent installs the application, performs runtime instrumentation against the running process, hooks sensitive functions, intercepts cryptographic operations, observes how authentication tokens are stored and refreshed in live memory, bypasses certificate pinning where it exists, and intercepts the traffic the application emits to characterise the backend it talks to.

Every credential, token, URL, identifier, and configuration value extracted during mobile testing flows directly into the same engagement's backend testing, because the chain is the product, and a point tool that never makes that connection cannot produce it.

After a fix is deployed, the same agent retests the original chain unlimited times with captured evidence on every run: redeploy the application, run the same chain, confirm the chain breaks, attach the proof. Mobile retesting becomes a continuous loop rather than a quarterly re-engagement.
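The retest rule fits in a few lines: replay the chain in order, record evidence for every step that runs, and treat the fix as confirmed when a step that previously succeeded now fails. The Python sketch below models each step as a hypothetical callable returning `(succeeded, evidence)` that stands in for real device and API actions. The step names and example evidence strings are illustrative only.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, List, Optional, Tuple

# A step returns (succeeded, evidence). Real steps would drive a device or an API.
Step = Tuple[str, Callable[[], Tuple[bool, str]]]


@dataclass
class StepResult:
    name: str
    succeeded: bool
    evidence: str
    recorded_at: str


@dataclass
class RetestReport:
    chain_broken: bool
    broken_at: Optional[str]
    results: List[StepResult]


def retest_chain(steps: List[Step]) -> RetestReport:
    """Replay an exploit chain in order and stop at the first step that fails.

    The fix is confirmed when some step fails; evidence is kept for every step that ran.
    """
    results: List[StepResult] = []
    for name, step in steps:
        succeeded, evidence = step()
        results.append(StepResult(name, succeeded, evidence, datetime.now(timezone.utc).isoformat()))
        if not succeeded:
            return RetestReport(True, name, results)
    return RetestReport(False, None, results)


# Hypothetical chain after a fix: token extraction still works, replay is now refused.
EXAMPLE_STEPS_AFTER_FIX: List[Step] = [
    ("extract refresh token from app storage", lambda: (True, "token recovered")),
    ("replay token for another account", lambda: (False, "HTTP 403")),
    ("read cloud object via API", lambda: (True, "not reached")),
]
```

Stopping at the first failed step keeps the evidence focused on where the chain now breaks; a report with `chain_broken=False` means the fix did not close the path.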

Static analysis was a reasonable proxy for mobile risk when attackers stopped at source code, and attackers stopped stopping at source code a long time ago.

Static-only mobile testing tells you that something might be wrong but not whether the wrong thing is exploitable, what it leads to, or how to close the chain.

End-to-end dynamic testing on real devices, with backend pivot in the same engagement and the same agent retesting in a continuous loop, is the work - anything less is reading the mobile half of an attack story and assuming that is the whole story.
